Newsletter: March 2013 | Vol 13, Issue 3

Association Management Company Update

Dear Colleagues,

I am pleased to share some exciting news! Since July 2012, when Susan Kistler, who has long been AEA's Executive Director, let us know that she wanted to move on to other ventures in her life, we have been working to find a new association management company (AMC). After a careful search for and review of leading applicants, we have selected SmithBucklin Corporation, with offices in Chicago and Washington, DC, to manage our Association, and our contractual agreements with them are almost complete. We are confident that SmithBucklin will be instrumental in helping AEA move to the next level in serving members and expanding the influence of the evaluation profession in the U.S. and internationally.

SmithBucklin Corporation is the world's largest association management and professional services company, providing flexible, tailored full-service management and project-based services to more than 320 trade associations, professional societies, technology user groups, government institutes/agencies and other nonprofit organizations. Through an international partnership, SmithBucklin also provides global reach and services to organizations operating internationally.

"We're thrilled about our new partnership with AEA," says John Stroiber, SmithBucklin Executive Vice President and the Chief Executive of their Business Trade and Industry Practice. "We look forward to working with AEA's dedicated volunteer leaders to continue to build on the past successes of the organization. The new capabilities and resources now available to the Association will position AEA well for building future member value."

The selection of SmithBucklin came through the work of the Association Management Company/Executive Director Selection and Transition Task Force, whose co-chairs, Mel Mark and Stewart Donaldson, worked with members Jody Fitzpatrick, John Gargani, Robin Miller, Ricardo Millett, and Brian Yates to recruit, screen, and select association management companies appropriate for AEA. Board members then reviewed finalists, interviewed their representatives, and reached consensus on SmithBucklin as the company to lead us in the future. The Board strongly believes that the partnership with SmithBucklin is the right choice for AEA and will allow the Association to continue to serve our current and future members and the profession at the highest level possible.

The transition from Kistler Consulting to SmithBucklin has begun and will be completed by June. While quite a few SmithBucklin professionals will be assigned to AEA, we will have the opportunity to work with SmithBucklin in April to select an Executive Director who will lead that work. We will keep you posted on our progress. Should you have additional questions or concerns, please do not hesitate to reach out to me directly at jody.fitzpatrick@udenver.edu. And, of course, if you have questions about the conference, just contact AEA at its usual e-mail address or phone number.

Sincerely,

Jody

Jody Fitzpatrick

AEA 2013 President

AEA's Values - Walking the Talk with David Bernstein

Are you familiar with AEA's values statement? What do these values mean to you in your service to AEA and in your own professional work? Each month, we'll be asking a member of the AEA community to contribute her or his own reflections on the Association's values.

AEA's Values Statement

The American Evaluation Association values excellence in evaluation practice, utilization of evaluation findings, and inclusion and diversity in the evaluation community.

i. We value high quality, ethically defensible, culturally responsive evaluation practices that lead to effective and humane organizations and ultimately to the enhancement of the public good.

ii. We value high quality, ethically defensible, culturally responsive evaluation practices that contribute to decision-making processes, program improvement, and policy formulation.

iii. We value a global and international evaluation community and understanding of evaluation practices.

iv. We value the continual development of evaluation professionals and the development of evaluators from under-represented groups.

v. We value inclusiveness and diversity, welcoming members at any point in their career, from any context, and representing a range of thought and approaches.

vi. We value efficient, effective, responsive, transparent, and socially responsible association operations.

I'm David J. Bernstein, and I am a Senior Study Director at Westat. I was an inaugural member of AEA in 1986. I am Past Chair of the Nonprofits and Foundations TIG, and was Founding Chair of the Government Evaluation TIG and the Graduate Students and New Evaluators TIG. I served on AEA task forces on Educational Accountability and Electronic Communication, was Local Arrangements Co-Chair for the 2002 AEA Conference, and I'm an aea365 blogger. Prior to Westat I worked for the U.S. Department of Health and Human Services, the American National Red Cross, and Montgomery County, Maryland. Although my work was not always exclusively evaluation related, my energy and enthusiasm have never strayed far from my chosen profession.

I have been an advocate of the AEA Guiding Principles for Evaluators since they were developed, and I frequently use them to guide my evaluation practice and to educate non-evaluators on the value of evaluation and the values of evaluators. Consistent with the AEA values, I am a proponent of utilization of evaluation findings, even though it is challenging in highly charged and resource-constrained political environments. I am interested in evaluation sponsors and stakeholders, as evidenced by the theme of New Directions in Program Evaluation Volume 95 that I co-edited with Rakesh Mohan and Maria Whitsett. While I am all for methodological rigor, what is our investment in evaluation worth if it does not also contribute to decision-making, program improvement, and policy formulation?

AEA's emphasis on continual development of evaluation professionals and the development of evaluators from under-represented groups is one of the reasons why AEA is my professional association. The opportunity to mingle with peers from all over, including leaders of our field, is the reason I have been actively involved for 30 years with AEA and its predecessors.

AEA's emphasis on inclusiveness and diversity, and on efficient, effective, responsive, transparent, and socially responsible association operations, reflects values that Valerie Caracelli and I share as Co-Chairs of the 2013 AEA Conference Local Arrangements Working Group (LAWG). Many volunteers are helping us plan locally based activities to support Evaluation 2013 conference attendees. We are working on activities related to diversity, social justice, international attendees, local logistics, and communications. We will even be supporting conference attendees who want to visit their member of Congress or congressional staff! Valerie and I hope you will attend the conference in Washington, DC, in October, and we look forward to seeing you.

Go to the Evaluation 2013 Website

Policy Watch - EPTF Update & Ways to Get Involved

From Cheryl Oros, Consultant to the Evaluation Policy Task Force

With spring comes renewal, and the Evaluation Policy Task Force (EPTF) is updating its work plan, filling two openings, and beginning a system of three-year terms and rotation off the EPTF for its members. Although this year's application process is complete, you may want to consider serving on the EPTF in the future as part of your career planning and your contribution to the field of evaluation, or getting involved in its current efforts now. The following brief introduction might help.

AEA instituted the EPTF to develop an ongoing capability to influence evaluation policies critical to practice, such as defining evaluation and its requirements, methods, implementation, and the resources and budgets needed. The EPTF has been working with congressional committees, the White House, and agencies to promote sound evaluation policies in the federal government. Its projects have included informing the Office of Management and Budget's guidance on evaluation; helping draft appropriations language for the President's Emergency Plan for AIDS Relief (PEPFAR) program; and assisting the United States Agency for International Development and the State Department in setting policies to expand evaluation capacity. Learn more about the EPTF's work and review policy guidelines in The Evaluation Roadmap for a More Effective Government.

The EPTF's members are Eleanor Chelimsky, former President of AEA and Director of the Government Accountability Office (GAO) Evaluation Division; Katherine Dawes, Director of the Environmental Protection Agency Evaluation Division; Patrick Grasso, Social Impact, formerly with the World Bank Independent Evaluation Group and GAO; George Grob, Deputy Inspector General, Federal Housing Finance Agency and former EPTF Consultant; Susan Kistler, AEA Executive Director; Mel Mark, Head of Psychology at the Pennsylvania State University and former President of AEA; Beverly Parsons, AEA President-elect and Executive Director of InSites; and Stephanie Shipman, Assistant Director, Center for Evaluation Methods and Issues, GAO and founder of the Federal Evaluators Group. Past EPTF members include former AEA presidents Leslie Cooksy, Jennifer Greene, and William Trochim.

I am the EPTF Consultant, an independent consultant and retired director of federal evaluation offices who served on inter- and intra-departmental evaluation policy committees, including a Committee on Science working group that created Science of Science Policy: A Federal Research Roadmap. I have also worked at GAO, at research firms, and at Georgetown University, conducting nationwide evaluations of federal programs and teaching evaluation.

There are a variety of ways you can become involved, e.g., visiting your member of Congress to support evaluation policy during the Annual Conference in Washington, DC, this year; contacting the EPTF at evaluationpolicy@eval.org with suggestions for policy contacts at the federal and state levels; and signing up for the EPTF Discussion List.

Go to the EPTF Webpage

Face of AEA - Meet Benoît Gauthier

AEA's more than 7,800 members worldwide represent a range of backgrounds, specialties and interest areas. Join us as we profile a different member each month via a short Question and Answer exchange. This month's profile spotlights Benoît Gauthier, who shares membership with the Canadian Evaluation Society (CES) and serves as a vital link between AEA and CES.

Name: Benoît Gauthier

Affiliation: Circum Network Inc.

Degrees: MA (Political Science), MA (Public Administration)

Years in the Evaluation Field: 29

Joined AEA: 2003

AEA Leadership Includes: Webmaster for the Canadian Evaluation Society (CES); in regular communication with AEA since serving as webmaster for the 2005 joint CES-AEA conference.

Why do you belong to AEA?

"The two key attractors for me are the excellent publications and the very active network of evaluation professionals. Whereas CES was created by practitioners of evaluation who were active in government and in non-profits, AEA has academic roots. This key difference in their original impetus extends to today: CES is generally practice-focused and AEA leans more toward reflection on evaluation. The two associations are highly complementary."

Why do you choose to work in the field of evaluation?

"In 1983, I was a purchaser and analyst of survey research, and someone mentioned that a certain Canadian government organization was hiring for positions in what was called program evaluation. I read some Canadian government publications in preparation for an interview and, voilà, I became an evaluator. That said, I truly adopted evaluation as my profession very rapidly thereafter. My interest in evaluation came from the opportunity it gave me to inform real life (and often important) decisions through rigorous research."

What's the most memorable or meaningful evaluation that you have been a part of - and why?

"I was involved in several interesting evaluations, but one was particularly instructive. We were tasked with evaluating the performance of immigration officers identifying individuals who should not be admitted into Canada without special documentation. We had devised quantitative approaches to measure the immigration officers' performance that provided a solid foundation for the evaluation but offered no insight into the reasons for the observed performance. Another module of the evaluation involved sending actors to border posts and airports to play the roles of American travelers whose situation should call for an immigration interview. Everything went fine until word got out. We used this unplanned event as an interrupted time series and showed that immigration officers were two to three times more effective after knowing that they were observed than before. That allowed the evaluation to identify some of the weaknesses in the system, which were due not so much to information systems as to human resources. This evaluation highlighted for me the importance of a balance of qualitative and quantitative evidence in evaluation and the crucial aspect of seizing opportunities for knowledge wherever they present themselves."

What advice would you give to those new to the field?

"Be curious (evaluators face new situations, topics and challenges every day), open (evaluators learn so much from other fields of enquiry), critical (evaluators must question every assumption; that's our trade), humble (we strive for rigour but our tools have limits, like all tools of all trades), but confident (evaluators offer a unique, hopefully independent perspective)."

If you know someone who represents The Face of AEA, send recommendations to AEA's Communications Director, Gwen Newman, at gwen@eval.org.

eLearning Update - BetterEvaluation Coffee Break Series

From Stephanie Evergreen, AEA's eLearning Initiatives Director

Clear your calendar! In May, we will double up on our Coffee Break webinars with two webinars per week. Why are we going crazy? Because we'll be hosting an 8-part webinar series with BetterEvaluation and EvalPartners.

Patricia Rogers, BetterEvaluation's project director, notes, "Since the site went live in October 2012 we've had visitors from over 180 different countries, and an increasing number of user contributions from different countries. The webinar series will make more information readily accessible for evaluators, evaluation managers and users wherever they are located."

In pursuit of AEA Board priorities around outreach to international colleagues, this Coffee Break series will introduce and explain BetterEvaluation's rainbow framework. The framework organizes every task involved in an evaluation into 7 basic steps. Each webinar will focus on one step (one will be an overview) and the tasks involved in carrying out that step. Expert presenters will point out the range of options evaluators have for these tasks and the resources listed on BetterEvaluation's site that can be of assistance.

Adds Rogers: "We're delighted to be partnering with AEA on this initiative. AEA has a long standing commitment to a global and international evaluation community and understanding of evaluation practices, and a sizable proportion of international members and members working in international development. BetterEvaluation is also supporting learning across the global evaluation community, and is a partner in the international EvalPartners initiative."

Best of all? The webinars in the BetterEvaluation series are open to the public. Tell your friends and colleagues who are not AEA members. Everyone can attend! Click below to register (you must separately register for each webinar you wish to attend).

Overview

Step 1 Define

Step 2 Frame

Step 3 Describe

Step 4 Understand Causes

Step 5 Synthesize

Step 6 Report & Support Use

Step 7 Manage

Don't forget to check out the other webinars in our lineup.

And look here for more details on our eStudy courses. We're excited to launch Intermediate Consulting Skills and bring back Empowerment Evaluation and Alternative Evaluation Reporting Techniques. Registration deadlines are quickly approaching!

International Collaboration Update

As a member of the International Organization for Cooperation in Evaluation (IOCE), AEA considers it important to engage actively and strategically with evaluators globally. In January, the AEA Board voted to affirm international collaboration as a priority for FY14, and in February it set a budget for that work: "For new efforts, AEA should contribute to society through collaborating with international evaluation organizations that align with its values towards furtherance of the evaluation profession at a cost of no more than $40,000 across FY14 and FY15." Therefore, this series will feature the international experiences and efforts of AEA leaders to implement recommendations and exemplify current agreements with international partners. For example, AEA's 2012 President Rodney Hopson participated in two significant international forums. The outcomes of these forums are highlighted below.

Thailand

The EvalPartners International Forum on Civil Society's Evaluation Capacities was held in Chiang Mai (December 3-6) and was co-sponsored by UNICEF and IOCE. More than 80 participants attended the forum, which featured a historic gathering of evaluation associations, the signing of the Chiang Mai Declaration, and the creation of several working groups to carry out identified priorities. Hopson observed, "The key involvement in EvalPartners by U.S. based AEA members Tessie Catsambas (AEA IOCE representative) and Jim Rugh (EvalPartners Coordinator) was evident at the forum."

Saudi Arabia

The Third International Exhibition and Forum on Education (IEFE) was held in Riyadh (February 18-22) and was hosted by the Saudi Arabian Ministry of Education. With evaluation as this year's theme, the forum focused on providing substantial opportunities for international businesses in the education sector to create partnerships and connect with decision makers from Saudi Arabian and Gulf Cooperation Council government bodies overseeing education developments. Among experts from more than 20 countries and more than 40,000 attendees, Hopson gave a lecture on "Repositioning Evaluation through Culturally Responsive Evaluation in the 21st Century" and a workshop on "Building Opportunities for International Engagement with the American Evaluation Association."

AEA member Eqbal Darandari, Vice Dean for Development Deanship, Vice Dean Assistant for Quality and Development, and Project Management Office Manager at King Saud University, expressed support: "AEA should play an international role and identify what it can achieve through its international members in each country. A plan needs to be developed on how to spread AEA's impact and interaction on a global level and to assess needs that should be considered regarding the different cultures in terms of the practice of evaluation."

AEA member Tahira Hoke also attended the forum. Hoke, Director of the Academic Assessment and Planning Center at Prince Sultan University and a Claremont Graduate University alumna, adds: "I always hoped that the American Evaluation Association would one day increase its international presence to support AEA members, increase awareness of the field of evaluation beyond American borders, and promote its significant contributions to organizations and institutions around the world. I believe Hopson's and Darandari's honorable efforts will increase AEA's international membership and improve the quality of evaluation efforts in the Middle East and Africa."

Diversity - Welcome to the Diversity TIGs Working Group!

From Karen Anderson, AEA's Diversity Coordinator Intern

The Diversity TIGs Working Group (DTWG) was formed to communicate to the membership the importance of recognizing diversity in evaluation and to clarify the terms "diversity" and "culture" and what they mean in different contexts. The DTWG will lead discussions to promote ongoing, meaningful conversation about diversity in evaluation, and will work closely with the AEA Public Statement on Cultural Competence in Evaluation Dissemination Working Group (CCWG) to promote culturally competent evaluation.

The following excerpt from the AEA Public Statement on Cultural Competence in Evaluation speaks to the need to attend to diversity in evaluation practice: "Attempts to categorize people often collapse identity into cultural groupings that may not accurately represent the true diversity that exists. For example, an evaluator who is not aware of values placed on different modes of communication within the deaf and hard-of-hearing communities (e.g., the use of sign language, lip reading, or personal assistive listening devices) can miss important individual differences regarding ways of interacting."

Be on the lookout for updates and new resources from the DTWG! The DTWG will be reporting directly to the membership through such avenues as the aea365 daily blog, the monthly newsletter, conference sessions, and journal articles.

The Working Group TIGs include:

If you have any questions for members of the DTWG, please send them to: karen@eval.org.

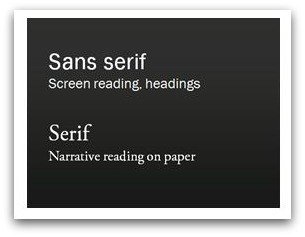

Potent Presentations - Advice on the Fonts in Your Evaluation Slideshow

From Stephanie Evergreen, Potent Presentations Initiative Coordinator

If we are presenting our evaluation findings and the audience can't read the slides, we've defeated the purpose and gotten in the way of supporting evaluation use.

This month, we'll talk a little about slide design.

Generally, p2i advocates that slides should have very little text. When possible, what text there is should be reserved for keywords, precise definitions, elaboration of acronyms, that sort of thing. Most of the content should instead come from you, the speaker. With what text there is on a slide, pay attention to these two points from our Slide Design Guidelines:

- Font is at least 24 point.
    - View your slides in slide sorter view. If you can't read the words, neither will the audience members in your back row.

Of course, the size of the font should depend on the size of the room where you'll be presenting. The larger the room (especially if it doesn't have multiple projection screens), the larger your font should be.

- Fonts are easily read as they appear on screen.
    - Use sans serif fonts (like Calibri, Century Gothic, or Trebuchet). Look for a font with thick, even lines. Thin lines, like those in many serif fonts (Times New Roman, Georgia, or Baskerville), disappear when projected, though they work well for reading on handouts.

If you choose to work from one of the default templates available in PowerPoint, double check its font choices - many are serif. It may seem like a small change, but one quick adjustment there will go a long way in making your evaluation work more legible for your audience.

We have discussed nine other differences that make a difference, and you can browse them all in the Design Demo Slides PowerPoint file located on our homepage.

Nominations for AEA's 2013 Awards Due Friday, June 7

Nominations are now being accepted for the seven American Evaluation Association Awards. Please take this opportunity to acknowledge outstanding colleagues and outstanding work. Through identifying those who exemplify the very best in the field, we honor the practitioner and advance the discipline. Aside from the Ingle Award, all awards are open to non-AEA members as a way to recognize contributions to the field. Self-nominations are accepted, but should also be supported by a recommendation from an AEA member.

All nominations must be completed and received in the AEA office by the deadline, Friday, June 7, 2013 in order to be considered.

AEA award recipients will be recognized at Evaluation 2013, to be held October 14-19 in Washington, DC. Recipients are announced in the American Journal of Evaluation, and each winner will receive a complimentary year of membership in AEA.

Learn more online at: http://www.eval.org/aboutus/awards.asp

Interactive Evaluation Practice

AEA members Jean King and Laurie Stevahn are co-authors of Interactive Evaluation Practice: Mastering the Interpersonal Dynamics of Program Evaluation, a new book published by SAGE.

From the Publisher's Site:

"You're about to start your first evaluation project. Where do you begin? Or you're a practicing evaluator faced with a challenging situation. How do you proceed? How do you handle the interactive components and processes inherent in evaluation practice? Use Interactive Evaluation Practice to bridge the gap between the theory of evaluation and its practice. Taking an applied approach, this book provides readers with specific interactive skills needed in different evaluation settings and contexts. The authors illustrate multiple options for developing skills and choosing strategies, systematically highlighting the evaluator's three roles as decision maker, actor, and reflective practitioner. Case studies and interactive examples stimulate thinking about how to apply interactive skills across a variety of evaluation situations."

From the Authors:

"Although technical aspects of evaluation practice often take center stage, over the years we realized that, in fact, successful evaluation practice relies on effective interpersonal interaction. Informed by research, our goal was to create an accessible, hands-on guidebook to help evaluators in the practical work of engaging others constructively when conducting evaluations for different purposes across diverse contexts. We wanted our book to be incredibly serviceable, including exhibits and templates for repeated use and examples from various types of practice and content areas. Part I provides conceptual grounding for IEP, including three frameworks for envisioning and planning studies. Part II presents skills, strategies, worksheets, and sample materials adaptable across a wide range of evaluation approaches. Part III offers teaching cases with exercises useful in university or professional development training settings. Each chapter invites evaluators to think critically and creatively about the interpersonal dimensions of their own practice."

About the Authors:

Jean A. King is a professor in the Department of Organizational Leadership, Policy, and Development at the University of Minnesota. King founded the Minnesota Evaluation Studies Institute (MESI) in 1996 and currently serves as its Director. With over 30 years' experience teaching and conducting evaluations, King has received numerous awards for her work, including AEA's Myrdal Award for Evaluation Practice and the Ingle Award for Extraordinary Service, three teaching awards, and three community service awards.

Laurie Stevahn is Professor of Education at Seattle University and Director of the Educational Leadership doctoral program. She teaches graduate courses in research and evaluation, curriculum and instruction, and social justice in professional practice. Special areas of expertise include cooperative learning and conflict resolution, organization development and systemic change, interactive models of teaching and assessment, and essential competencies for program evaluators.

Go to the Publisher's Site

Evaluation Humor - Learning from the Past

Every issue, we include a light-hearted feature designed to generate a laugh. Below is an illustration that appeared in the February 2013 issue of the Environmental Program Evaluation (EPE) TIG's newsletter.

If you have an illustration or graphic you'd like to share, feel free to forward it to Newsletter Editor Gwen Newman at gwen@eval.org.

New Member Referrals & Kudos - You Are the Heart and Soul of AEA!

Last January, we began asking on the AEA new member application how each person heard about the Association. Not surprisingly, the most frequent response is "from friends or colleagues." You, our wonderful members, are the heart and soul of AEA, and we can't thank you enough for spreading the word.

Thank you to those whose actions encouraged others to join AEA in February. The following people were listed explicitly on new member application forms:

Tarek Azzam * Penny Burge * Heidi Chorzempa * Jim Corrigan * Jane Dowling * Christie Drew * Kimberly Farris * Michelle Granner * Kari Greene * Leah Hakkola * Jeanette Harder * Indiana Evaluation Association * Kathleen Kelsey * Rebecca Koladycz * Johann Louw * Leah Neubauer * Donna Neusch * New Mexico Evaluators * Debra Rog * Cheyanne Scharbatke-Church * Audrey Schuh-Moore * David Shellard * Iris Smith * Emily Spence-Almaguer * Javier Toro * Jan Upton * Wellington Consulting Group * Alice Willard * Mike Yamashita

New Jobs & RFPs from AEA's Career Center

What's new this month in the AEA Online Career Center? The following positions have been added recently:

- Senior Research Manager at Strategic Data Project (Cambridge, MA, USA)

- Senior Evaluation Specialist at Mott MacDonald (Washington, DC, USA)

- Evaluation Director at United Teen Equality Center (Lowell, MA, USA)

- Director, Evidence-Based Practice at The Annie E. Casey Foundation (Baltimore, MD, USA)

- Manager, Strategic Planning and International Relations at Faculty of Medicine, University of Toronto (Toronto, ON, CANADA)

- Vice President, Education Research at IMPAQ International (Columbia, MD, USA)

- Evaluation & Research Associate at Spark Policy Institute (Denver, CO, USA)

- Evaluation Specialist II at Public Health Institute's Network for a Healthy California (Sacramento, CA, USA)

- Evaluator for NSF Microscopy Grant at Hagerstown Community College (Hagerstown, MD, USA)

- Program Analyst, Housing Choice Voucher Program at Seattle Housing Authority (Seattle, WA, USA)

Descriptions for each of these positions, and many others, are available in AEA's Online Career Center. According to Google Analytics, the Career Center received approximately 3,900 unique visitors over the last 30 days. Job hunting? The Career Center is an outstanding resource for posting your resume or position, or for finding your next employer, contractor, or employee. You can also sign up to receive notifications of new position postings via email or RSS feed.

About Us

The American Evaluation Association is an international professional association of evaluators devoted to the application and exploration of evaluation in all its forms.

The American Evaluation Association's mission is to:

- Improve evaluation practices and methods

- Increase evaluation use

- Promote evaluation as a profession and

- Support the contribution of evaluation to the generation of theory and knowledge about effective human action.

phone: 1-508-748-3326 or 1-888-232-2275
