Newsletter: October 2011 | Vol 11, Issue 10

Dear AEA Colleagues,

I write this greeting on my return from the annual conference of SVUF, the Svenska utvärderingsföreningen, aka the Swedish Evaluation Society. SVUF 2011, sited in the magically beautiful city of Stockholm, offered continued engagement with a theme central to our work as evaluators - that of evaluation quality. As you likely recall, Leslie Cooksy advanced this theme for AEA's Evaluation 2010.

At the SVUF conference, I had the wonderful pleasure of reconnecting with old friends, and the privilege of establishing new relationships with potentially new colleagues and friends. This social-relational and networking dimension of professional conferences is well recognized.

Less well recognized is the social-relational dimension of evaluation itself, and its connection to evaluation quality. Attention to the social fabric of evaluation is anchored in the stance that evaluation is fundamentally a social practice, that is, a practice enacted in interaction with others in particular places and times. Because these interactions matter to the integrity of the evaluation's implementation and to the meaningfulness of the evaluation's results, the social-relational dimension of evaluation also matters to its quality.[1] Moreover, these relational facets of evaluation are a key site for engaging with the values that are integral to our craft, as relationships importantly shape an evaluation's purpose and audience, methodology, and broader societal role (the three locations for evaluation's values that I have addressed in earlier posts). And so values and quality are connected in evaluation, through the relational fabric of our practice.

Within this set of inter-connections - evaluation relationships with values, and both with evaluation quality - let us remember the plurality of understandings of evaluation quality, and thus of salient values and thus of the character of evaluative relationships, that constitute our community. We are graced with a powerful diversity of standpoints on these central evaluative concerns, and we gather strength from this diversity. We also gather strength from our fundamental respect, one of the other, and our commitment to dialogue and deliberation rather than conflict and confrontation. These are values we share, I believe.

At our annual conference in Anaheim, and in our own workaday activities, may we all celebrate our values pluralism, gain strength from our valued relationships, and reach out to make one new acquaintance, thereby strengthening our bonds with one another and, in turn, our community.

With anticipation,

Jennifer

Jennifer Greene

AEA President 2011

[1] Helen Simons and I presented a paper offering this argument at SVUF this year.

Policy Watch - Getting Through to Policy Makers

From George Grob, Consultant to the Evaluation Policy Task Force

Conventional wisdom holds that policy makers pay no attention to evaluations. The purported explanation is that decision makers are not open to the careful, non-political advice of professional analysts like evaluators. As a result, good evaluations sit endlessly gathering dust on book shelves, ignored and forgotten.

However, the truth is that hundreds of evaluations are used by government decision makers every year. These include studies done by the Government Accountability Office, Inspectors General, Federal agencies, respected foundations, and evaluation centers at prominent universities. I have seen, firsthand, evaluation-inspired policy or management changes in fields like foster care, Head Start, nursing homes, hospice care, prescription drug pricing, and maternal and child health services, to name just a few. Of course, this doesn't always happen. It is therefore salutary to discern which evaluations are used, which are not, and why. Here is my own analysis of policy-relevant evaluations.

I would like to begin with where not to begin. Many evaluators believe that it is their methodologies that most directly affect evaluation use. No doubt methods matter. However, other more fundamental factors come into play. Broadly speaking, evaluations that influence public policy are ones that decision makers are aware of and interested in, that contain practical, affordable recommendations, that are easy to read, and that are timely. Timeliness means that evaluations are available when decisions are made, usually in connection with legislative, regulatory, or budget processes.

Evaluations are also more likely to be used if decision makers request them. This can happen within the policy making processes named above, and when government agencies issue requests for proposals to obtain outside experts to perform evaluation studies. Bidding on government contracts is particularly intricate and burdensome. Learning the procurement ropes and switches is difficult but de rigueur. However, once mastered, they are empowering.

As in the music business, breaking into policy circles is difficult. However, federal and state agencies, large consulting firms, universities, and foundations usually have public affairs offices, congressional liaison offices, and procurement experts. They do not want evaluators going solo but will appreciate knowing about important evaluations that are in the works. My own experience has been that these "gatekeepers" are remarkably good at what they do and are open to good ideas. Starting with the appropriate support staff is generally a winning approach for everyone involved, since all will take pleasure in the success that comes from meaningful evaluations that can make a difference.

Bottom line: the odds of influencing government decision makers are low without a command of policy and procurement processes. These processes are best learned by making friends not so much with decision makers (although that can help) as with agency and institutional gatekeepers.

To influence policy makers, what matters are new insights on relevant issues, practical and affordable recommendations, easy-to-read reports, timeliness, and personal connections through gatekeepers.

Go to AEA's Evaluation Policy Task Force website page

Evaluation 2011 - Values and Valuing in Evaluation

The theme of AEA's Evaluation 2011 conference is "Values and Valuing in Evaluation." Presidential Strand Co-chairs Gail Barrington and Melvin Hall have assembled a thought-provoking lineup that begins with an opening plenary offered by AEA's 2011 President Jennifer Greene, who will underscore the importance of taking responsibility for the values dimensions of our craft. Our invited guests, representing experts in other fields who also face challenges of engaging meaningfully and ethically with values in their work, will further flesh out the theme.

Thursday's plenary will feature journalist Kim Barker, who spent five years covering Pakistan and Afghanistan for the Chicago Tribune and who has authored The Taliban Shuffle, sharing her perspectives on the culture and politics of these lands and on the importance of women correspondents in war zones. On Friday, we will hear from news commentator Leonard Pitts, Jr., who won a Pulitzer Prize for commentary in 2004 and who has also authored a novel, Before I Forget.

Our Presidential Strand sessions feature three additional invited guests. Melanie Barwick is a specialist in child and youth mental health systems and knowledge translation, based in Toronto, Canada. Stella Ting-Toomey brings expertise in intercultural communication theory and practice and joins us from her home at Cal State Fullerton. Diane Saphiere also brings expertise in communication across cultures from her faculty position at the Summer Institute for Intercultural Communication.

And on Saturday, we will hear some ethnographic commentary about our time together from esteemed colleagues Eleanor Chelimsky, Karen Kirkhart, and a third observer, followed by the now-traditional closing champagne toast.

In all, there are more than 700 additional sessions that include paper sessions and panels, think tanks, demonstrations, roundtables and posters, and a welcoming of performance-based sessions this year in tandem with the theme of values. Please partake of all, sampling the familiar and the new. Please reconnect with old friends and introduce yourself to at least one new colleague during the conference, as these connections support our valuing of inclusiveness and community.

We hope to see you all in Anaheim! If you are there, be sure to attend our annual awards luncheon, where we will honor four individuals and three groups for outstanding work. Check out our official announcement, then read future newsletters to learn more.

Go to the 2011 Awards Announcement

TechTalk - Uploading to the AEA eLibrary

From LaMarcus Bolton, AEA Technology Director

Did you know that there are over 800 entries in the AEA Public eLibrary, a number that will increase significantly over the next couple of weeks?

Many of us will be gathering next week in Anaheim for Evaluation 2011, where we'll learn from over 1,000 speakers. Conference speakers are strongly encouraged to upload their materials to the eLibrary to facilitate distribution and to decrease the conference's environmental impact by limiting the printing required for the event.

Whether you are attending or not, I encourage you to visit the eLibrary over the coming weeks to look at the ever-expanding selection of materials available there. Please note that you do not need to be a conference presenter to share materials in the eLibrary. It is meant for peer-to-peer exchange of your own handouts, instruments, papers, etc.

If you are a presenter, or any member, who has yet to upload to the eLibrary, check out the following short, 4-minute how-to video that walks you through the process.

New Data Visualization and Reporting TIG Offers Sessions at Evaluation 2011

Today, we're talking with Stuart Henderson, one of the leaders for the new Data Visualization and Reporting Topical Interest Group (DVR TIG). This will be the first time that the DVR TIG has sessions on the conference program, and they're trying a few things that we haven't seen before at AEA. Stuart, tell us what's new that you're bringing to the conference this year.

"Since this is our first year, we wanted to come up with some creative ways to introduce our TIG as well as engage and provide a service to all AEA members. To accomplish this, we are introducing two new events: an IgniteAEA session and a slide clinic."

IgniteAEA

Ignite, which has the motto "enlighten us, but make it quick," is a nationwide project that promotes short, accessible talks (sometimes called lightning talks) about a variety of topics. The Ignite format is simple: 5-minute presentations that consist of 20 slides that auto-advance every 15 seconds.

We thought that adapting the Ignite format for AEA would be a perfect way to introduce alternatives to traditional presentations as well as to provide an entertaining and engaging way to present information about evaluation and data visualization. We think presenting or listening to Ignite talks can help us as evaluators begin to think outside the box about how we deliver information in presentations. Plus, we thought it would be a unique and fun way to help people become familiar with our TIG and what it can offer. If you want more information about the event or an idea of what Ignite sessions are like, there is an IgniteAEA website and a general Ignite website.

The session, scheduled for Thursday, November 3 from 7:15 to 8:45 pm, will be held in California Ballroom Section B, and all are welcome.

Slide Clinic

The second event we are sponsoring is a slide clinic on Tuesday, November 1, from 6:00 to 9:00 pm in the Laguna Room. It is going to be a kind of slideshow triage. All AEA presenters can bring their conference slides to the clinic and get individualized expert advice on layout, design, and aesthetics. The clinic is a way to promote our TIG's goal of helping evaluators make their data clearer, so that it is more useful and usable for audiences.

In addition to the two new events described above, we will be having a variety of traditional papers, panels, and sessions that explore new ways to present, report, and refine data. The range of events is a way for our DVR TIG to share our enthusiasm about evaluation reporting and data visualization. We invite everyone to attend the IgniteAEA session, the slide clinic, and our sponsored sessions.

AEA's Got Talent!

In Celebration of AEA's 25th!

In honor of AEA's 25th birthday, we will hold an AEA Talent Show as part of the annual conference on Thursday evening, November 3, from 7:15 to 9:30 pm, at the Anaheim Hilton! Join us in the Avalon room for a fun program of solo and group singing, dance performance, and comedy! We will also be inviting audience participation, both spontaneous and planned. If you would like to play an instrument (or join in with others), sing, dance, or entertain by displaying another talent, please bring your instrument or props, and join in the fun. Just email Tessie Catsambas to coordinate.

Meet George Julnes - Incoming Board Member

In our last issue, we promised a quick introduction of our three incoming Board members as well as the 2013 President. We'll spotlight each individually and thank them for their commitment to service.

George Julnes is a Professor of Public and International Affairs at the University of Baltimore and has been a member of AEA since 1980. He serves on the editorial boards of Evaluation and Program Planning and New Directions for Evaluation and consults with federal agencies on policies in support of quality evaluation methods, including with the U.S. Department of Education and the National Science Foundation. He has chaired and served as Program Chair of the Quantitative Methods TIG for over a decade.

"Much of what I value about AEA is that its members are a self-selected group of people who want to make the world a better place and who believe that better understanding is fundamental to that mission. I am running for the Board now because I am committed to this mission and see this as an important time for AEA. Although AEA is more successful than ever, with a growing membership and a growing demand for quality evaluation in the public, nonprofit, and private sectors, this success brings with it several challenges that I would like to help address as a Board member."

In his ballot statement, George pledged to support the growth of AEA as well as the field and cited his service, writings, and professional contributions.

"These challenges for AEA, among others, will be addressed in the years ahead, but how they are addressed will depend largely on the experiences of those engaged in leadership positions. For much of the past two decades I have been leading evaluations of federal and state programs (including evaluations of Medicaid Infrastructure Grants for providing services for persons with disabilities and a recent random assignment experiment of an innovative policy allowing persons with disabilities to increase their earnings without losing all of their Social Security benefits). I also teach graduate courses in program evaluation, for many years at Utah State University and now as Professor of Public and International Affairs at the University of Baltimore. I have contributed to the theory of evaluation by publishing articles in most of the evaluation journals, co-authoring a book on evaluation (with Mel Mark and Gary Henry), and now completing my fourth edited volume of New Directions for Evaluation."

Paul Brandon Appointed Editor-in-Chief of New Directions for Evaluation

Paul R. Brandon has been appointed to serve as Editor-in-Chief of AEA's topical journal, New Directions for Evaluation (NDE). Brandon, a Professor of Education at Curriculum Research & Development Group in the College of Education at the University of Hawai'i at Manoa (UHM) in Honolulu, will serve a three-year term beginning in January 2013. He will succeed Sandra Mathison, who is completing her second three-year term.

"When I assume the editorship, NDE will have had 136 issues published over 35 years under the direction of 11 distinguished editors," notes Brandon. "Building on this foundation strikes me as a challenge and an opportunity. The challenge will be (among others, no doubt!) to maintain NDE's high standards while mastering the nuances of serving as Editor-in-Chief overseeing issue editors. The opportunity will be to broaden the list of issue topics in light of AEA's emphasis on diversity while keeping a balance among topics addressing evaluation theory, methods, practice, and the profession. I am honored and humbled by being named NDE Editor-in-Chief and hope to meet the challenges and take advantage of the opportunities fully!"

Brandon brings to the post extensive experience in evaluation - having worked in a non-profit research and evaluation organization; a city employment testing organization; a private K-12 school serving native Hawaiians; and at UHM, where he has served since 1989, often in the role of principal investigator (PI) or co-PI, for university or agency projects funded by local, state, and national entities, most recently for the National Science Foundation and the U.S. Department of Education's Institute of Education Sciences. Brandon also serves as a member of the graduate faculty of his college's Educational Psychology Department, where he directs thesis and dissertation committees and teaches occasional evaluation classes.

With Nick L. Smith, Brandon served as co-editor of the 2008 book, Fundamental Issues in Evaluation, and is serving as the co-editor of the American Journal of Evaluation's Exemplars section. He has won two best-evaluation awards from Division H of the American Educational Research Association (AERA) and in 2011 was given the AERA Research on Evaluation Special Interest Group's first annual Distinguished Scholar Award.

Consulting Start-Up and Management: A Guide for Evaluators

AEA member Gail Barrington is author of a new book entitled Consulting Start-Up and Management: A Guide for Evaluators and Applied Researchers, published by SAGE.

From the Publisher's Site:

"For almost 20 years, Gail V. Barrington has run popular workshops to help professional researchers determine if they have what it takes to succeed as consultants. This book makes that helpful guidance, and more, available to a wider audience. Barrington shows readers how to: get started, set fees, find work, manage time and money; set up an ownership structure and business systems; manage contracts; and work with sub-contractors and staff. With Barrington at their side to provide advice and encouragement, independent practitioners have the roadmap to success!

"This book is a must-read for all consultants who are considering going out on their own or those who want to fine-tune their current business practice. It is also a key resource for students enrolled in program evaluation, applied research, and management courses and in professional certification programs."

From the Author:

"I've been offering workshops since 1993 and this book provides more information about this exhilarating but challenging career option. It was a luxury to take the time to reflect on my experiences in consulting and to consider some of the important lessons I have learned," says Barrington. "It's a privilege to share my thoughts with others but what I wasn't expecting was that it was a lot of fun as well as a lot of work. As someone once said, 'I wasn't a writer until I wrote a book.' This truism expresses the real learning opportunity that writing a book provides."

About the Author:

Gail V. Barrington is a well-known Canadian program evaluator who has made significant contributions to the profession through her evaluation practice, writing, teaching, training, mentoring, and service to the field of evaluation. She founded her consulting firm, Barrington Research Group, Inc., in Calgary, Alberta, Canada in 1985 and has conducted more than 125 program evaluation and applied research studies at the federal, provincial, and grassroots levels, mainly in the fields of health, education, training, and research. She has been an active member of both AEA and CES since 1985 and has served on a number of committees for these organizations. She was a member of the AEA Board of Directors from 2006 to 2008, is a member of the editorial board for New Directions for Evaluation, and co-edited Issue #111 (2006) on Independent Evaluation Consulting with Dawn Hanson Smart. In 2008 she received the Canadian Evaluation Society award for her contribution to evaluation in Canada.

Go to the Publisher's Site

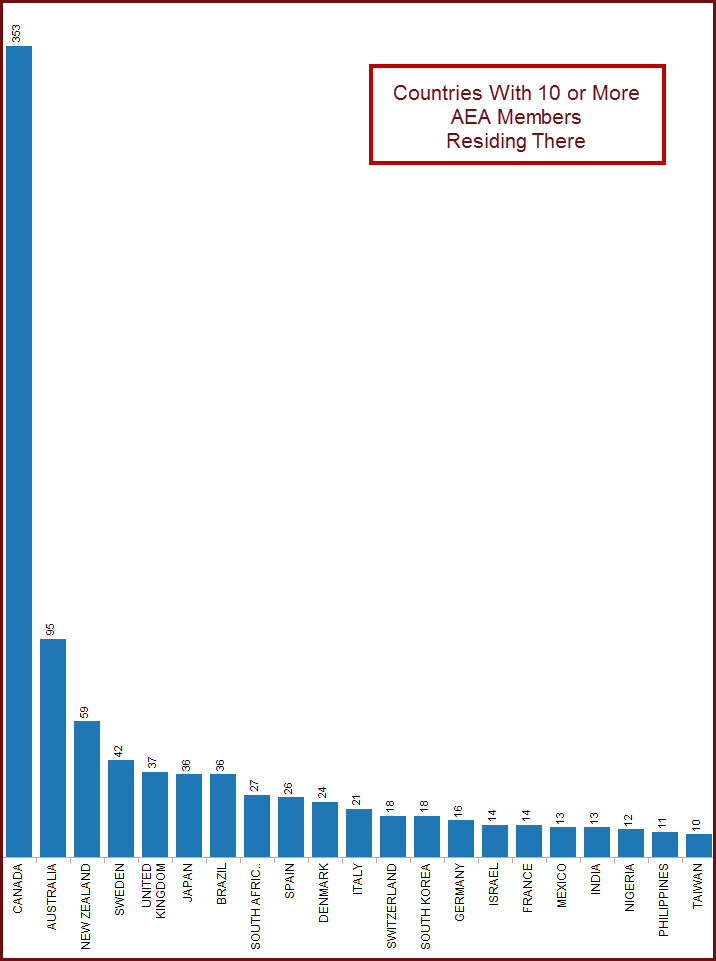

Data Den: AEA Members by Country

From Susan Kistler, AEA Executive Director

As of today, AEA has over 7,000 members, representing 100 countries. Fifteen percent of the AEA membership resides outside of the United States.

Evaluation Fun & Laughter

Occasionally, we come across evaluation-related humor. Here is a cartoon by AEA member Chris Lysy, who in his day job is a research analyst at Westat. Chris, thanks for sharing your work! Enjoy, everyone. If you have art and/or humor you'd like to share, feel free to forward it for consideration to our newsletter editor, Gwen Newman, at gwen@eval.org.

New Jobs & RFPs from AEA's Career Center

What's new this month in the AEA Online Career Center? The following positions and Requests for Proposals (RFPs) have been added recently:

- Sr. Performance Evaluation Analyst at I.M. Systems Group Inc. (Silver Spring, MD, USA)

- Program Specialist, Institutional Evaluation at Minnesota Historical Society (St Paul, MN, USA)

- External Evaluator - Internet Initiatives at Internews (Washington, DC, USA)

- Specialist, Research and Evaluation at District of Columbia Public Schools (Washington, DC, USA)

- UNICEF - Launch of New and Emerging Talent Initiative (NETI) at UNICEF (Worldwide)

- Evaluation Manager at Louisiana Public Health Institute (New Orleans, LA, USA)

- Consultant for A Meta Evaluation at Oxfam America (Boston, MA, USA)

- Evaluator at The Evaluation Group (Atlanta, GA, USA)

- Assistant/Associate Research Scientist at The College Board (Newtown, PA, USA)

- Strategic Data Project Fellowship at Center for Education Policy Research at Harvard University (Cambridge, MA, USA)

Descriptions for each of these positions, and many others, are available in AEA's Online Career Center. According to Google Analytics, the Career Center received approximately 7,000 unique page views in the past month. It is an outstanding resource for posting your resume or position, or for finding your next employer, contractor, or employee. Job hunting? You can also sign up to receive notifications of new position postings via email or RSS feed.

About Us

The American Evaluation Association is an international professional association of evaluators devoted to the application and exploration of evaluation in all its forms.

The American Evaluation Association's mission is to:

- Improve evaluation practices and methods

- Increase evaluation use

- Promote evaluation as a profession and

- Support the contribution of evaluation to the generation of theory and knowledge about effective human action.

phone: 1-508-748-3326 or 1-888-232-2275
