| Newsletter: September 2012 | Vol 12, Issue 9

| The Countdown is On |

Dear AEA Colleagues,

We are just 20 days away from Evaluation 2012 and my mind is reeling with anticipation for what lies ahead. Because we will have a special newsletter coming out shortly, focusing specifically on this year's conference, I will keep my remarks here short. But suffice it to say that this event is always the premier event both for the organization and for the presidency and I'm honored to have had the privilege.

There are many who have helped me flesh out this year's theme of Evaluation in Complex Ecologies: Relationships, Responsibilities and Relevance and you'll hear more from them, as well. My thanks to all of them and I hope to see all of you in Minneapolis as we embrace this annual opportunity to re-connect, share and expand our horizons with fellow members as well as colleagues in the field.

There is, of course, still lots to be done in terms of advance preparations with more than 2,500 attendees, more than 1,000 sessions and 45 pre-conference professional development workshops.

In the meantime, we as an association continue to move forward in new directions as discussed in our last issue. The AEA Board will be poised to share more in the not too distant future about the AMC Executive Director selection/transition, evaluation policy developments, ways to begin developing priorities for the association at the international level, and a host of other initiatives that you'll be hearing about shortly. As always, I invite you to touch base with me personally and I look forward to seeing you soon.

Best,

Rodney

Rodney Hopson

AEA President 2012 |

| AEA's Values - Walking the Talk with Beverly Parsons | |

Are you familiar with AEA's values statement? What do these values mean to you in your service to AEA and in your own professional work? Each month, we'll be asking a member of the AEA community to contribute her or his own reflections on the association's values.

AEA's Values Statement

The American Evaluation Association values excellence in evaluation practice, utilization of evaluation findings, and inclusion and diversity in the evaluation community.

i. We value high quality, ethically defensible, culturally responsive evaluation practices that lead to effective and humane organizations and ultimately to the enhancement of the public good.

ii. We value high quality, ethically defensible, culturally responsive evaluation practices that contribute to decision-making processes, program improvement, and policy formulation.

iii. We value a global and international evaluation community and understanding of evaluation practices.

iv. We value the continual development of evaluation professionals and the development of evaluators from under-represented groups.

v. We value inclusiveness and diversity, welcoming members at any point in their career, from any context, and representing a range of thought and approaches.

vi. We value efficient, effective, responsive, transparent, and socially responsible association operations.

I'm Beverly Parsons, executive director of InSites, a nonprofit research, evaluation, and planning organization. Thanks to many of you, I've had the privilege of serving on the AEA Board of Directors from 2009-2011 and was recently elected to serve as 2014 President.

Recently, as I walked into a hotel ballroom, I saw the familiar round banquet tables and an unfamiliar object - at each place setting, a kaleidoscope stood at attention like a toy soldier. Guests aimed their kaleidoscopes at the chandelier. Oohing and aahing at the changing colors and designs, they noticed key words about Strengthening Families tumbling among the colored crystals.

What words from the AEA Values Statement would I put in a kaleidoscope? Enhancement of public good would show up on each turn. Inclusiveness and diversity would be there, too. Viewers would also see high quality, ethical, culturally responsive, global, international, efficient, effective, transparent, and socially responsible. As the AEA values connected and reconnected with the crystals in the kaleidoscope, concepts that sometimes seem disjointed and scattered would begin to form patterns. I would be searching for values linked to three themes: environmental sustainability, social justice, and economic well-being.

In my evaluation work, these themes are becoming the definers of enhancement of public good. In the business world and elsewhere, they are known as the Triple Bottom Line (TBL). I began to focus on the Triple Bottom Line through my participation in the Bainbridge Graduate Institute certification program in Sustainable Business. This past year, the Triple Bottom Line has inspired my evaluation work and my role on the AEA Board.

The Triple Bottom Line encourages organizations to be fully responsible for their actions by establishing measures of their financial, social, and environmental performance. Through rethinking their work, they can account for the systemic impact of their work on the economic, social, and environmental well-being of the communities and populations they serve. I apply systems-oriented evaluation practices and AEA values to help organizations see their opportunities to promote the public good. As O.W. Holmes said, "Every now and then a [person's] mind is stretched by a new idea or sensation, and never shrinks back to its former dimensions." Linking our values, TBL, and evaluation has been such a stretch for me.

This past year, I chaired an exploratory AEA Board task force (with Board members Thomas, Yates, and Cooksy) about our attention to environmental sustainability. To learn more, see the slides I posted in the elibrary from our session at AEA2011.

|

| Policy Watch - Listening Back - Evaluation Lessons from Policy Makers | |

From George Grob, Consultant to the Evaluation Policy Task Force

Five years ago, AEA's brand new Evaluation Policy Task Force (EPTF) contacted President Bush's Office of Management and Budget (OMB) to offer advice on program evaluation. A year later, it began reaching out to incoming senior staff of President Obama's Administration, periodically providing them advice and sending them AEA's Evaluation Roadmap for a More Effective Government. Through this column and other means, we have tried to keep you informed about what the EPTF was telling these officials. Here, I would like to tell you what they have been saying back.

On October 7, 2009, Peter Orszag, in one of his first acts as Director of OMB, issued a memorandum on "Increased Emphasis on Program Evaluation," in which he called for "rigorous, independent program evaluations" as "a key resource in determining whether government programs are achieving their intended outcomes." He was crystal clear that his focus was on "impact evaluations, or evaluations aimed at determining the causal effects of programs" and determining "the most rigorous study designs appropriate for different programs."

Initial interpretations of this policy were that it was emphasizing the use of random assignment studies. However, it also acknowledged "experimental or quasi-experimental, pre-post implementation, [and] correlation analysis" study designs.

OMB reinforced this policy each year through formal budget guidance, such as its May 18, 2012, Memorandum on the Use of Evidence and Evaluation in the 2014 Budget. OMB has gradually broadened its guidance on analytic methods, encouraging a much wider approach to program assessment, with hints on combining various data sources and analytic methods.

For example, in the Fiscal Year 2013 Analytic Perspectives, Budget of the U.S. Government (page 19), the most recently issued of this annual series, the "Program Evaluation" portion has been renamed "Program Evaluation and Data Analytics." It describes how evaluation, performance measurement, broad statistical data series, and other data can complement one another. For example, it promotes the use of administrative data, survey data, regression analysis, and qualitative data.

Thus, Federal evaluation policy has evolved and will probably continue to do so. The EPTF will do its best to stay on top of and influence this evolution. At the same time, professional evaluators need to hear what Federal evaluation policy directors are saying back. Stay tuned. Another version will be coming soon - after the Presidential election.

Go to the EPTF Web Page |

| Meet Jean King - Incoming Board Member | |

In our last issue, we promised a quick introduction of our three incoming Board members as well as the 2014 President. We'll spotlight each individually and thank them for their commitment to service. We begin with Jean King.

Jean King directs the interdisciplinary Minnesota Evaluation Studies Institute (MESI) at the University of Minnesota; collaborates on a regular basis with colleagues from a variety of disciplines (e.g., public health, public policy, Extension); and teaches introductory and advanced evaluation courses.

In her nomination statement, she noted, "I am running for the Board because I believe the American Evaluation Association - evaluation's premier professional association and my primary professional home - is at a critical juncture and faces the exciting possibility of moving the field toward true professional status in the very near future.

"At this point in its history, I believe our field is poised to take important and, in my opinion, necessary steps toward increased professionalism. In my opinion, it is time for Board-level discussions of credentialing and program accreditation, hallmarks of a profession that evaluation in our country currently lacks. My work on the Essential Competencies for Program Evaluators (with Laurie Stevahn, Gail Ghere, and Jane Minnema) has become the basis for professional development initiatives around the world, including the Canadian Evaluation Society's (CES) Professional Designation Program. The CES program has provided the status of Credentialed Evaluator to roughly 100 evaluators in Canada, and American evaluators who join the CES are eligible to apply. Is that how we want colleagues to get their evaluation credentials? With Board leadership, discussion of credentialing and accreditation across our membership may help us further the field's development. I would welcome the opportunity to create ways for members to participate in such discussions and identify productive next steps."

Jean is a familiar face within AEA and has taken active roles for the past 20 years.

- Conference management. "From 1992-1999, prior to AEA's hiring a management firm, I had direct responsibility for helping to organize and run the conference (developing the call, managing the proposal review process, scheduling sessions, preparing the program, on-site management, etc.). I have also served as Local Arrangements Chair at opposite ends of the Mississippi River, in New Orleans, LA in 1988, and again 24 years later in the Twin Cities of Minneapolis/St. Paul, MN this year."

- Editorial contributions. "I have provided service through continuing editorial work and contributions on AEA journals: New Directions for Evaluation (Editorial Board, 2000-present; Editor-in-Chief, 2004-2006), and the American Journal of Evaluation (Editorial Board, 2000-2009; reviewer, 2010-present)."

- Topical Interest Group (TIG) activities. "I have played an active leadership role in four different Topical Interest Groups: Using Evaluations (Co-Chair, 1991-1993); Collaborative, Participatory, and Empowerment Evaluation (Founder/Co-Chair, 1994-1999); Evaluation in Precollegiate Education (Founder/Program Co-Chair, 1999-2001); and Organizational Learning and Evaluation Capacity Building (Founder/Co-Chair, 2006-2009). Through this experience I learned the important role that TIGs play in building AEA's capacity and flexibility."

A huge welcome to Jean and thanks to all who voted and participated in this year's election process!

|

| AEA Announces 2012 Awards Winners - Congrats to All! | |

The American Evaluation Association will honor four individuals and one group at its annual awards luncheon on Friday, October 26, to be held in conjunction with its Evaluation 2012 conference in Minneapolis, Minnesota. Honored this year are recipients in five categories involved with cutting-edge evaluation/research initiatives that have impacted citizens around the world.

"Our AEA awards represent a feather in the cap of a select few of our members annually," notes AEA's 2012 President Rodney Hopson. "This year's awardees are no different. Our colleagues are both deserving and represent the outstanding recognition of theory, practice, and/or service to the field, discipline, and association from our junior members to our senior members both locally and internationally."

We will spotlight each recipient more fully in subsequent issues of this newsletter. If you're at this year's conference, you're invited to join us for the festivities. Join us in congratulating:

- Tarek Azzam, Assistant Professor, Claremont Graduate University

2012 Marcia Guttentag Promising New Evaluator Award

- Katherine A. Dawes, Director, Evaluation Support Division, U.S. Environmental Protection Agency

2012 Alva and Gunnar Myrdal Government Evaluation Award

- Melvin M. Mark, Professor, Pennsylvania State University

2012 Paul F. Lazarsfeld Evaluation Theory Award

- Marco Segone, Senior Evaluation Specialist, UNICEF

2012 Alva and Gunnar Myrdal Evaluation Practice Award

- The Paris Declaration Phase 2 Evaluation Team

2012 Outstanding Evaluation Award

Go to AEA's Awards Page |

| Face of AEA - Meet Kathy McKnight | |

AEA's more than 7,600 members worldwide represent a range of backgrounds, specialties and interest areas. Join us as we profile a different member each month via a short Question and Answer exchange. This month's profile spotlights Kathy McKnight, Director of Research, Center for Educator Effectiveness, PEARSON.

Name, Affiliation: Kathy McKnight, Director of Research, Center for Educator Effectiveness, PEARSON

Degrees: PhD Clinical Psychology, Minor: Program Evaluation & Research Methodology

Years in the Evaluation Field: 18

Joined AEA: 1997

AEA Leadership Includes: Annual Conference Chair 2009-2011; Workshop Task Force Committee lead (reviewing workshops for annual conference)

Why do you belong to AEA?

"To network with and learn from other evaluators; to contribute to the professional development of evaluators; to present ideas and information and get feedback from colleagues; to contribute to the knowledge base, particularly regarding methodology."

Why do you choose to work in the field of evaluation?

"Information garnered from a rigorous program evaluation complements information from other sources and contributes to the development of better programs and policies, ultimately to the benefit of society. This field allows me to contribute to bettering the lives of others in an indirect way: by providing information by which social programs and policies can be improved."

What's the most memorable or meaningful evaluation that you have been a part of - and why?

"As a grad student under the mentorship of Lee Sechrest, I carried out an evaluation of a Mock Crash program sponsored by the Tucson Fire Department and a local high school's chapter of SADD, Students Against Drunk Driving. High school students staged a drunk driving accident on their football field, with the fire department's rescue helicopter and emergency vehicles called to the scene to find injured and dead bodies (played by the students). The entire student body observed from the stadium seats. I loved this project because it involved the students. They helped to determine the intended outcomes of the program, how we would measure that, and how we would measure the immediate impact of the Mock Crash on the audience that day. They came up with a number of ingenious measures including counting seatbelts across the laps of students as they entered and left the school building for several days afterward and at Prom Night. They interviewed students at the scene and took pictures to capture reactions. The best part was helping them synthesize the data and create a scholarly presentation. These high school students presented their evaluation findings to graduate students and faculty at the University of Arizona, and they did a fabulous job! It was a teaching opportunity for me; provided a great partnership opportunity for multiple agencies; and it inspired SADD to work on program improvements and to carry out their own evaluations moving forward, an outcome of which I am particularly proud!"

What advice would you give to those new to the field?

"I don't think enough emphasis is given in our field to the importance of strong research methodology training, which includes study design, data analysis (whether quantitative or qualitative) and most importantly, measurement. Many are prepared with content, but weak on method. Our evaluations are only as good as our methods - if we have the inappropriate tools, we are running around with hammers looking for nails. That is not an effective way to carry out useful program evaluations, and has the strong potential for providing misleading conclusions. Every field has its tools of the trade - research methodology is a critical tool for our trade that should not be downplayed."

If you know someone who represents The Face of AEA, send recommendations to AEA's Communications Director, Gwen Newman, at gwen@eval.org. |

| eLearning Update - Coffee Break Sponsorship | |

From Stephanie Evergreen, AEA's eLearning Initiatives Director

Does your TIG harbor some amazing know-how? Of course it does! So, consider sponsoring a Coffee Break webinar.

If you are a TIG leader, you can reach out to your TIG members and solicit volunteers to present a Coffee Break, seeking content in particular that demonstrates a tool for evaluators.

If you are a TIG member, suggest your tool to your TIG leaders and ask for their sponsorship and support.

Sponsorship is easy. It simply means you:

- Let us list your TIG as a sponsor of the Coffee Break.

- Spread the word about the Coffee Break via your usual conduits to encourage attendance.

Sponsorship lets TIGs contribute resources to the AEA community and raises TIG visibility. Some TIGs have taken sponsorship even further.

The Data Visualization & Reporting TIG put together a page for upcoming webinars - and it also lists past TIG-sponsored webinars with links to the recordings and handouts.

The International & Cross-Cultural TIG also lists its past sponsored webinars, with images from each talk and including the recent four-video series co-sponsored by AEA, Catholic Relief Services, American Red Cross/Red Crescent, the United States Agency for International Development and this TIG.

Though Coffee Break webinars are normally members-only, this series was the first open to the public. We saw great results and what's better is that you can view the videos AND share them! We only ask that you don't edit the recordings, so that credit goes where credit's due.

The four videos all focus on different aspects of Monitoring and Evaluation:

- Monitoring & Evaluation Planning for Projects/Programs with Scott Chaplowe

- Evaluation Jitters Part One: Planning for an Evaluation with Alice Willard

- Evaluation Jitters Part Two: Managing an Evaluation with Alice Willard

- SMILER: Simple Measurement of Indicators & Learning for Evidence-based Reporting with Guy Sharrock and Susan Hahn

Speaking of Coffee Break webinars, in October we'll have Rebecca Stewart demonstrating the Image Grouping Tool, David Shellard showing us Data Visualization with Microsoft Excel, and Lindsey Varner walking us through Qualitative Data Analysis Using R. Register for each Coffee Break here.

Over in our eStudy lineup, October brings Tom Chapel's 8-hour Introduction to Evaluation course. But hurry, registration closes October 2. Later this fall we welcome Scott Chaplowe, talking about Monitoring and Evaluation Planning for Programs/Projects, and Michelle Kobayashi will be back to guide us through Creating Surveys to Measure Performance and Assess Needs. Details and registration are here.

Go to the eStudy Website Page |

| Potent Presentations Quick Tips & Checklist | |

Wow, are your conference presentations ready? Here's where you should focus your attention in the few weeks between now and the conference:

- Practice! At least once per week, in varied locations, and at least twice in front of other people.

- Print final copies of presentation notes.

- Have a physical backup of your notes even if you normally rely on Presenter Mode so you aren't left in the lurch if technology fails you.

- Upload presentation materials to AEA's eLibrary.

- Print 50-100 copies of your handout, if you have one. Alternatively, in an effort to "go green" you can keep your handout in the AEA eLibrary and share the link with your audience.

At the session:

- Arrive at the session early and connect with the other presenters and session chair so that the session may start on time.

- Identify who will be holding the timing cards so that you may watch them during your presentation. Timing cards in each room identify "3 minutes," "1 minute," and "Stop" to prompt presenters and keep sessions on track.

- Deliver your presentation. Speak clearly, maintain eye contact with the audience, and relax. Stick to the agreed upon time for your portion to ensure that everyone has the opportunity to present and interact with the audience. Shine.

- Respond to questions. Be aware of the limited time and offer concise responses, noting when appropriate that you may be able to follow up post-conference to continue the conversation.

- Depart on time. Leave the room and continue discussion in the foyer so the next session can prepare.

If you are a bit behind, no worries. Download the whole checklist and buzz through the earlier steps.

One more great way to prepare for your presentation is to review the insider knowledge we gleaned from our interviews with the Dynamic Dozen - 12 of the top AEA presenters. The three short reports address presentation messaging, slide design, and delivery. Access them all on our p2i Tools page.

Go to the Potent Presentations Webpage |

| We Want Your Photos! | |

What does the conference theme "Evaluation in Complex Ecologies: Relationships, Responsibilities, Relevance" mean to you? And, how do you see it displayed around you? These two questions are the basis for an interactive and lively discussion for AEA's closing session on Saturday, October 27, 2012 at 4:30 pm.

To gather diverse perspectives on this year's conference theme in a fun and thought-provoking manner, we are inviting all AEA members, both conference and non-conference attendees, to share a photo that reflects this year's conference theme. Each photo submitted should be classified under one of the conference sub-themes of "relationships," "responsibilities," or "relevance" and include a brief explanation (25 words or less) of what the photo represents and why it was chosen. Up to 100 photos will be selected and displayed as part of a slide show video at Saturday's closing townhall session.

Photos submitted may be provocative, funny, unique or thoughtful, but should be in appropriate taste for showing to a mixed gender and multicultural audience. The photo can be taken before or during the conference, as part of an evaluation activity, or simply reflect the complex ecologies in which evaluations take place. Images from the internet are also appropriate. The photo may be taken by the individual submitting it or by someone else. If using someone else's photo, the individual submitting the photo is responsible for ensuring that it has met proper copyright and use permission.

Submission Guidelines and Details:

- Photos should use a standard electronic format (.jpg preferred) and ideally have a higher quality resolution (at least 1280 pixels)

- Explanations should not exceed 25 words

- Submit your photo as an attachment and your explanation in the email body to: AEA2012closingphotos@gmail.com

- Send in your photos between today and Thursday, October 25th at 12 pm (Central Time)

- Only one photo submission per person please!

A Presidential Strand Task Force will review the submitted photos and make selections for the closing session. Early submission is appreciated and prizes will be given to the first five individuals that submit their photos. Remaining prizes for photos will be distributed randomly to those attending the closing session on Saturday.

Questions or inquiries about this process can be sent to Brandi Gilbert and Jenica Huddleston at AEA2012closingphotos@gmail.com. |

| Diversity - NEW Center for Culturally Responsive Evaluation and Assessment | |

From Karen Anderson, AEA's Diversity Coordinator Intern

This month I'm highlighting the new Center for Culturally Responsive Evaluation and Assessment (CREA), led by founding director Stafford Hood, associate director Thomas Schwandt, and a core interdisciplinary team of faculty. Here, Hood provides some added insight via a short Question & Answer exchange.

What is the history of CREA? How was CREA developed?

"The Center for Culturally Responsive Evaluation and Assessment (CREA) was established October 2011 in the College of Education at the University of Illinois at Urbana-Champaign. CREA is the result of the evolving work, commitment, and collective vision of a small but growing U.S. and international group from the evaluation and assessment communities over a little more than a decade that are joined by computer scientists similarly committed to a culturally responsive agenda in their work."

What makes CREA uniquely different from other evaluation centers?

"None have a unique focus on the role, impact, and utility of the study of cultural context in educational evaluation, assessment, research, and policy."

Please share major projects/initiatives CREA is currently working on.

"Currently, our major focus is on the CREA Inaugural Conference, 'Repositioning Culture in Evaluation and Assessment,' hosted by the College of Education at the University of Illinois at Urbana-Champaign, April 21-23, 2013, in Chicago, Illinois. A call for papers and info about the conference can be found here."

Examples of currently ongoing projects:

- New immigrant students in Ireland schools: Joe O'Hara and Gerry McNamara, CREA-Dublin Office, Dublin City University

- African American Distributive Multiple Learning Styles System (AADMLSS)/City Stroll: Juan Gilbert, Human Centered Computer Division, Clemson University

- Diversity as an Innovation Resource: Valerie Taylor, Computer Science and Engineering, Texas A&M University and Executive Director, Center for Minorities and People with Disabilities in Information Technology

What student benefits have you observed?

"Since CREA has only been established for less than a year, its specific student benefits cannot be surmised at this time. However, based on previous efforts and projects that have informed the development of CREA (i.e., NSF funded Relevance of Culture in Evaluation Institute at Arizona State University directed by Stafford Hood and Melvin Hall) students have benefitted through close interactions with evaluation and assessment scholars, evaluation field experiences in culturally diverse settings, and scholarly collaborations."

What personal and professional benefits have you experienced as a result of leading CREA?

"I do not really see myself as leading CREA but merely serving as the "point person" with Tom Schwandt for the group of us who are committed to this work. I have benefitted personally and professionally from my relationships with those in the CREA family at the University of Illinois and the CREA-affiliated researchers that include colleagues from the U.S., indigenous nations (North America, Hawaii, and Maori of Aotearoa, New Zealand) and Ireland."

If you want to include a culture in evaluation initiative you're involved with please send information to Karen@eval.org. |

| Meet CREATE - An Organization for Educational Evaluators |

AEA is increasingly known for its partnerships with organizations that share mutual goals. Here, we talk with James P. Van Haneghan, an AEA member active with the Consortium for Research on Educational Accountability and Teacher Evaluation (CREATE), who will share more about the organization and its early collaborative work with AEA.

About CREATE

CREATE arose from the work of the Evaluation Center at Western Michigan University. Started as a "Center" led by Daniel Stufflebeam, it became a consortium in 1995. The first National Evaluation Institute (NEI), CREATE's annual conference, was held in 1992. The goal reported by Stufflebeam (1992) was to improve schools through the use of valid, state-of-the-art, evaluation methods. Having been to several NEI meetings and becoming a member of its Board of Directors, I feel that Stufflebeam's vision has been fulfilled. The conference is small, supportive, and always has high quality keynote speakers. I also enjoy the mix of participants in CREATE. Much like AEA, the NEI attracts individuals from higher education, schools, the consulting world, and other organizations. Not surprisingly, there is an overlap in the membership of CREATE and AEA. For example, one of our current board members, Marco Munoz, won the Marcia Guttentag New Evaluator Award in 2001. Currently, CREATE is housed at the University of North Carolina at Wilmington. The 2012 NEI is October 4-6 at the Shoreham Hotel in Washington, DC (go to www.createconference.org for more information). Kati Haycock will be the opening keynote speaker, James Stronge (our 2012 Millman Award winner) will present on teacher evaluation, Andy Porter will speak on the Common Core Standards, and Dan Duke will speak about turnaround schools.

"Like many evaluators," says Van Haneghan, Professor and Director of Assessment and Evaluation in the College of Education at the University of South Alabama, "I started working on evaluations largely because I knew a bit about research methods, measurement, and statistics. I was trained as an applied developmental psychologist with interests in cognitive development and education. As I gained more experience as an evaluator, I realized that I needed to connect to the evaluation community. As a starting point, I participated in the Evaluation Center at Western Michigan University's Summer Evaluation Institute. Along with the opportunity to learn from some of the leaders in evaluation, I left with a variety of resources and the hope of building connections with the professional community. AEA was one organization that I joined, and I have benefited greatly from my AEA membership. In addition to AEA, the Evaluation Center pointed me toward CREATE.

"I view my participation in CREATE as complementary to my AEA membership. Educational evaluation has always been important in AEA. The PreK-12 Educational Evaluation TIG has in the past been the largest TIG, and many of the founders of AEA were educational evaluators. AEA provides me with the broad scope of evaluation and a vision of the evaluation profession. CREATE provides me with a smaller, more focused set of experiences surrounding the practical elements of accountability and evaluation that pervade educational settings. It also provides great networking opportunities due to the organizational size and the commonality of interest among its members."

Stay tuned for more developments in our ongoing collaborative efforts with CREATE.

Go to the CREATE Website |

| In Memoriam - Richard Duncan | |

Richard Duncan, one of the founders of AEA's International and Cross-Cultural Evaluation TIG, passed away earlier this month at the age of 90.

Recalls AEA member Alexey Kuzmin, who credits Duncan with helping him become more engaged: "When I joined AEA in 1999, Richard contacted me at the conference and did exactly what I needed at that point in time: he helped me get connected with colleagues interested in international evaluation. Since then we regularly met at the AEA conferences and had a series of great conversations. Richard was a very experienced and wise person."

Indeed. Richard will be missed by many. |

| Rawlsian Political Analysis: Rethinking the Microfoundations of Social Science |

AEA member Paul Clements is the author of a new book published by Notre Dame Press titled Rawlsian Political Analysis: Rethinking the Microfoundations of Social Science.

From the Publisher's Site:

"In Rawlsian Political Analysis: Rethinking the Microfoundations of Social Science, Paul Clements develops a new, morally grounded model of political and social analysis as a critique of and improvement on both neoclassical economics and rational choice theory. What if practical reason is based not only on interests and ideas of the good, as these theories have it, but also on principles and sentiments of right? The answer, Clements argues, requires a radical reorientation of social science from the idea of interests to the idea of social justice.

"The most significant challenge to utilitarianism in the last half century is found in John Rawls's A Theory of Justice and Political Liberalism, in which Rawls builds on Kant's concept of practical reason. Clements extends Rawls's moral theory and his critique of utilitarianism by arguing for social analysis based on the Kantian and Rawlsian model of choice. To illustrate the explanatory power of his model, he presents three detailed case studies: a program analysis of the Grameen Bank of Bangladesh, a political economy analysis of the causes of poverty in the Indian state of Bihar, and a problem-based analysis of the ethics and politics of climate change. He concludes by exploring the broad implications of social analysis grounded in a concept of social justice."

From the Author:

"When I took 'EC 10: Introduction to Economics' in my freshman year at Harvard I found it disconcerting to learn that economic analysis is based on the assumption of rational utility maximization. As a Peace Corps Volunteer and development worker in The Gambia I found the World Bank/IMF structural adjustment programs (grounded in economic theory) disconcerting, and when I did my graduate work in Public Policy at Princeton these concerns were deepened. Also, I learned that rational choice theory, then on the ascendance in political science, is based on the same limited model of how people make decisions. I had taken a class with John Rawls when I was a college sophomore, and I read his A Theory of Justice from cover to cover while I was a Peace Corps Volunteer. In 1997, the year after I received my Ph.D. and my first year as an assistant professor, I read Rawls's Political Liberalism where he lays out his model of choice consisting of two "moral powers", the rational (like in economics and rational choice theory) but also the reasonable (the sense of justice, right, and fairness). It seemed apparent that if there is a different model of choice there must be a different social analysis based on it. This is what the book offers."

About the Author:

Paul Clements is a professor of political science at Western Michigan University. He also directs the Masters of International Development Administration program and is a faculty member of the Interdisciplinary Doctoral Program in Evaluation. He received his Ph.D. in Public Affairs from the Woodrow Wilson School at Princeton University.

Go to the Publisher's Site |

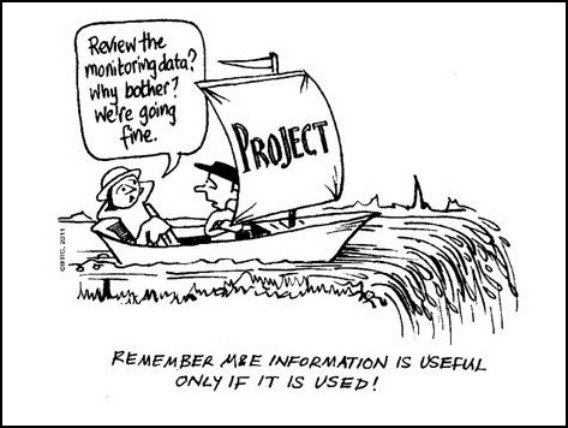

| Evaluation Humor | |

Could you use a little laughter today? Check out the cartoon below. You can find more in the IFRC Project and Program M&E Guide. Thanks to Scott Chaplowe, who mentioned it on the aea365 blog.

If you have an illustration or graphic you think will bring a chuckle, feel free to forward it to Newsletter Editor Gwen Newman at gwen@eval.org. |

New Member Referrals & Kudos - You Are the Heart and Soul of AEA!

| | Since January 1, 2012, the AEA new member application has asked how each person heard about the association. It's no surprise that the most frequent response is from friends or colleagues. You, our wonderful members, are the heart and soul of AEA and we can't thank you enough for spreading the word.

Thank you to those whose actions encouraged others to join AEA in August. The following people were listed explicitly on new member application forms:

Anita Baker * Sally Bond * Victoria Carlan * Sharon Chontos * Galen Ellis * Robert Fischer * Vincenzo Fucilli * Jerome Gallagher * Christie Getman * Efrain Gutierrez * Barbara Howard * James McDavid * Timothy McLaughlin * Kathleen Kelsey * Jean King * Melanie Moore * Clare Nolan * Rachel Oelmann * Shannon Orr * Peggy Parskey * Michael Quinn Patton * Mary Ramlow * Lillie Ris * Lorilee Sandmann * Diana Seybold * Wei Song * Jim Van Haneghan |

|

New Jobs & RFPs from AEA's Career Center | |

What's new this month in the AEA Online Career Center? The following positions have been added recently:

- Coordinator of Student Affairs Assessment and Research at Rutgers, The State University of New Jersey (New Brunswick, NJ, USA)

- Research Analyst II at First 5 LA (Los Angeles, CA, USA)

- Senior Evaluation Specialist at Asian Development Bank (Manila, PHILIPPINES)

- Associate Researcher at Center for Urban Initiatives and Research, UW-Milwaukee (Milwaukee, WI, USA)

- RFP LeV: Getting to the Heart of Jewish Education at Jewish Learning Venture (Philadelphia, PA, USA)

- Evaluation Specialist II at Public Health Institute's Network for a Healthy California (Sacramento, CA, USA)

- Senior Research Associate at Montclair State University - Center for Research and Evaluation on Education and Human Services (Montclair, NJ, USA)

- Associate Vice President, Assessment & Evaluation at Ashford University (San Diego, CA, USA)

- Research Associate at Prime Time Palm Beach County (Boynton Beach, FL, USA)

- Deputy Director of Research and Evaluation at Corporation for National and Community Service (Washington, DC, USA)

Descriptions for each of these positions, and many others, are available in AEA's Online Career Center. According to Google Analytics, the Career Center received approximately 4,000 unique visitors over the last 30 days. Job hunting? The Career Center is an outstanding resource for posting your resume or position, or for finding your next employer, contractor or employee. You can also sign up to receive notifications of new position postings via email or RSS feed.

|

| About Us | | The American Evaluation Association is an international professional association of evaluators devoted to the application and exploration of evaluation in all its forms.

The American Evaluation Association's mission is to:

- Improve evaluation practices and methods

- Increase evaluation use

- Promote evaluation as a profession and

- Support the contribution of evaluation to the generation of theory and knowledge about effective human action.

phone: 1-508-748-3326 or 1-888-232-2275

|
