| Newsletter: July 2012 | Vol 12, Issue 7

Conference Keynotes Announced |

Happy July colleagues,

The conference is coming! The conference is coming!

By now, you have received responses to your proposal submissions to present at the upcoming AEA conference (Evaluation in Complex Ecologies: Relationships, Responsibilities, Relevance to be held 22-27 October in Minneapolis, MN) and you are making travel plans to attend. Please look out for periodic email announcements from our courteous and competent staff, search for the details of your conference presentations, and check the conference website for helpful hints leading up to the event.

Two plenary speakers are confirmed and are looking forward to addressing themes that relate to the conference topic:

- Linda T. Smith (Ngāti Awa, Ngāti Porou) has a professional background in Māori and indigenous education. Professor Smith currently serves as Pro Vice-Chancellor Māori at the University of Waikato and has served on a number of national advisory committees including the Tertiary Education Advisory Committee (TEAC) and the Māori Tertiary Reference Group for the Ministry of Education in New Zealand. Her plenary address serves as a call to decolonize research and evaluation approaches "so that new understandings can be developed that work and that enhance ethical and methodological practices more broadly." Professor Smith will share indigenous experiences, perspectives, values, and approaches that build a larger understanding of the complex ecologies at work in programs, policies, and projects in which we carry out evaluations.

- Oran Hesterman is President and CEO of Fair Food Network, a national nonprofit that works at the intersection of food systems, sustainability and social equity to guarantee access to healthy, fresh and sustainably grown food, especially in underserved communities. Recently featured on CNN Chief Medical Correspondent Dr. Sanjay Gupta's show, Hesterman is a national leader in sustainable agriculture and food systems. Dr. Hesterman's plenary will reflect on a recent cluster evaluation and provide examples of how addressing changes in complex systems can challenge evaluation thinking and practice.

Stay tuned for more information regarding conference opening and closing sessions, the presidential strand sessions, and more highlights featured in Minneapolis.

Kudos to the Environmental Evaluation Unconference (that's right, un-conference) recently held, 18-19 July, at American University in Washington DC. Led by a planning team chaired by Matt Keene at the Environmental Protection Agency, the 7th annual network forum sponsored by the Environmental Evaluators Network addressed questions such as "What are the responsibilities and opportunities for environmental evaluation to serve the public good?" and "What facilitates and impedes the discipline of evaluation and its practitioners in achieving environmental 'public goodness'?"

Beyond the unique style of the unconference, where attendees drive the meeting and build peer learning, creativity and collaboration, the day 2 opening presentation by Dr. Shelley Metzenbaum, Associate Director for Performance and Personnel Management, Office of Management and Budget (OMB), highlighted implications of the May 18, 2012 OMB Memorandum M-12-14, "Use of Evidence and Evaluation in the 2014 Budget," and other initiatives of the Obama administration to strengthen the use of evidence to inform federal programs and policies. Also at the unconference, building on a Board priority and an Association-wide priority established for FY2013, I facilitated a small session on how AEA could support evaluators in environmental responsibility and consciousness. Ideas generated included i) encouraging more partnerships and forums to stimulate interchange on the topic, ii) including affiliates in discussions about the topic, iii) influencing evaluation policy in the environmental sector, iv) providing access to training, tools, and guidance in environmentally-friendly ways, and more! If you have more ideas on expanding our networks in matters that relate to the environment or in other complex ecologies of our work, please share with me at president@eval.org.

With best wishes to you and yours this month!

Rodney

Rodney Hopson

AEA President 2012 |

| AEA's Values - Walking the Talk with Stewart Donaldson | |

Are you familiar with AEA's values statement? What do these values mean to you in your service to AEA and in your own professional work? Each month, we'll be asking a member of the AEA community to contribute her or his own reflections on the association's values.

AEA's Values Statement

The American Evaluation Association values excellence in evaluation practice, utilization of evaluation findings, and inclusion and diversity in the evaluation community.

i. We value high quality, ethically defensible, culturally responsive evaluation practices that lead to effective and humane organizations and ultimately to the enhancement of the public good.

ii. We value high quality, ethically defensible, culturally responsive evaluation practices that contribute to decision-making processes, program improvement, and policy formulation.

iii. We value a global and international evaluation community and understanding of evaluation practices.

iv. We value the continual development of evaluation professionals and the development of evaluators from under-represented groups.

v. We value inclusiveness and diversity, welcoming members at any point in their career, from any context, and representing a range of thought and approaches.

vi. We value efficient, effective, responsive, transparent, and socially responsible association operations.

I'm Stewart Donaldson, Dean and Professor of Psychology at Claremont Graduate University. I have had a wonderful experience with my colleagues at Claremont during the past 15 years developing and implementing Ph.D., master's, certificate, and professional development programs in evaluation. I'm honored to be currently serving my third year on the AEA Board, and to be completing my first year as co-chair of AEA's Graduate Education Diversity Initiative (GEDI) program.

I am proud I was on the board when AEA's new values statement was developed and adopted. This statement promises to guide our association and members as we face and take on controversial issues and evaluation challenges in the months and years ahead. I am especially pleased that we have made it explicit for future leaders in AEA that they should strive to be responsive to all of our diverse members, and to lead our organization in a transparent and socially responsible manner. In the future, I'm optimistic that AEA will be known for its values of inclusiveness and diversity and for welcoming evaluators from all backgrounds and points of view to engage the key issues of the day.

Another dimension of my service to the evaluation profession has been supervising more than 50 Ph.D. students, teaching hundreds of evaluation master's and certificate students, and providing trainings for thousands of professionals. I have tried to inspire these often enthusiastic participants to reach for the stars, to think deeply about their ethics, social responsibilities, and awareness of cultural diversity and competency, as well as to work hard to develop cutting-edge evaluation knowledge and technical skills. This past year has been particularly rewarding as I have lived my values of inspiring and educating new evaluators from traditionally underrepresented groups in the U.S. through the GEDI program, as well as improved understanding of good evaluation practices in the international evaluation community through my collaborative capacity development projects with the Rockefeller Foundation, UNICEF, UN Women, and other international partners.

Finally, the AEA values stated above have challenged me to think more critically about the evaluations I conduct, as well as the evaluations I supervise for my students. At the end of the day, these values make us aware that it is our responsibility in this profession to ensure that our evaluations are rigorous and of the highest quality possible taking into account the context. Our work should lead to better evidence-based decision making, help with policy formulation, promote social betterment and justice, and/or improve programs, organizations, communities, and developing societies across the globe. What a wonderful set of values and profession to be part of! |

| Diversity - Reshaping Minority-Serving Institutions (MSI) Program Initiatives | |

From Karen Anderson, AEA's Diversity Coordinator Intern

AEA's Minority-Serving Institutions (MSI) Program is a collaborative devoted to building faculty capacity in evaluation and broadening participation in the field. The MSI Program was initiated by AEA in 2005, and has included over 50 faculty from Historically Black Colleges and Universities (HBCUs), Hispanic Serving Institutions (HSIs), and Tribal Institutions.

We are excited that this year's cohort of MSI Program alumni is already hard at work, reshaping the program through the development of a new program model proposal. The group is being led by Art Hernandez, Professor and Dean of the College of Education at Texas A&M University, and Kevin Favor, Professor and Past Chair of the Department of Psychology at Lincoln University.

Reflecting on his own MSI experience, Hernandez states, "As a member of the second cohort, among many positives, I was very pleased to have the opportunity to experience the various professional development activities that AEA had to offer and to have access to the best evaluation thinkers and practitioners alive. I expect and sincerely hope that however the MSI program evolves, the availability of the many leaders in AEA to provide mentorship, training and collegial support continues."

A minor in program evaluation was introduced at Lincoln University in 2010, and Hernandez is currently routing a proposal for a Master's degree and certification program in Program Evaluation at Texas A&M University. He is expecting to accept the first cohort of students beginning in the Fall of 2013.

Looking into the future, Hernandez notes, "I expect the next iteration of the MSI program will provide, in addition to participant benefits, an opportunity for AEA to be advantaged by diverse thinking and practice and supported in its efforts to educate various constituencies about evaluation, promote effective evaluation practice and theory development and, most especially, provide new avenues for professionals and academics from diverse backgrounds to acquire evaluation skill and engage in the study and practice of evaluation for the benefit of all."

Current cohort member and MSI Program alumna Sylvia Ramirez Benatti states that "the goal for my participation in the alumni cohort is to provide our future participants with a clear and meaningful experience that enhances their own personal development and, I think the most important part, providing them with the knowledge and skills to teach the next generation of students."

A huge thank you to this group for their commitment to strengthening the MSI Program and a warm welcome to this year's cohort!

Jose Prado, Cal State University, Dominguez Hills

Sylvia Ramirez Benatti, University of the District of Columbia

Dawn Frank, Oglala Lakota College

Leona Johnson, Hampton University

Luisa Guillemard, University of Puerto Rico, Mayaguez Campus |

| eLearning Update - The Best Laid Plans | |

From Stephanie Evergreen, AEA's eLearning Initiatives Director

eLearning is a fickle field. If you've attended more than a handful of our Coffee Break webinars, you probably understand what I mean. Most of the time, everything moves along swimmingly. But more often than we'd like, something goes awry. For an occasional attendee, the viewing screen never displays the presenter's slides, for reasons none of us can explain. Once in a while, the presenter trips up when navigating among various open programs. As I write this, just last week the audio line completely snipped off. On those rare Coffee Breaks where something has hit a snag, we do our best to recover and learn from our mishaps. Here's what we do to take preventative care:

- We rehearse. About two weeks prior to each Coffee Break webinar, we hold a 45-minute rehearsal, where we discuss logistics, check on audio quality, practice handing the screen back and forth, and walk through each step of the presentation.

- We peer coach. All presenters are offered the option to invite a peer coach onto the rehearsal. Our awesome peer coaches - Nina Potter, Joe Heimlich, John Nash, and Juan Paulo Ramirez - give presentation pointers and insights about how to run the demonstration smoothly.

Even still, nerves and technology do not always cooperate with us when we go live. We're lucky enough to have very poised and graceful presenters who can easily roll through small hangups and give an effective demonstration. When things really go haywire, we have a backup plan:

- We stop.

- We cut off the webinar, apologize deeply, and schedule another time to rerecord the demonstration with no audience.

- We then notify everyone who registered when the recording is available. With more apologies.

Technology is never perfect but we are grateful for an avenue to host amazing, volunteer presenters as they share their know-how with our members.

And speaking of Coffee Break Demonstrations, this month we've hosted a series on Monitoring and Evaluation. In August, Cindy Banyai will present on data collection in an Asian context and Kylie Hutchinson will talk about evaluation reporting tips. See the upcoming schedule here.

In our eStudy series, August will bring 3 hours of Kylie Hutchinson's professional development on Effective Reporting Techniques. In September, Kim Fredericks will return to talk about Social Network Analysis for Beginners and Jennifer Catrambone will be back to present on Nonparametric Statistics. Oh, and did you know we added a course on Tableau? Details and schedule here.

Go to the eStudy Website Page

|

| Potent Presentations Quick Tips & Checklist | |

Conference session notices went out in early July. Have you started preparing for your session? In August, here's what you should consider knocking off your list:

Based on your key content points, develop visual aids, like slides. Each room is equipped with a traditional transparency projector for plastic transparencies, an LCD projector, a computer and a screen. Consider the time available and the multiple learning styles of attendees (auditory, visual, etc.) to create a valuable presentation.

- Refer to the Potent Presentation Slide Design Guidelines as you develop your slides.

- Embed the font into your file so that it will appear the same when it is emailed to your chair or when you plug your flash drive into the room's laptop.

- Avoid acronyms, jargon, and abbreviations in your visual aids and handouts. Past evaluations have clearly indicated that these frustrate attendees, in particular new and international attendees, and make a presentation difficult to comprehend.

- Prepare one slide that you can put up at the beginning and end of the presentation with your presentation title, name, and contact information. In case you do not have enough handouts, encourage attendees to write down this information for follow-up.

You can find the Potent Presentation Slide Design Guidelines and a complete Presentation Preparation Checklist at the Potent Presentation webpage, here.

If you missed the training webinars on how to prepare for and deliver an Ignite session, catch up by viewing the recording. |

| American Journal of Evaluation (AJE) Rises in Journal Citations Rankings |

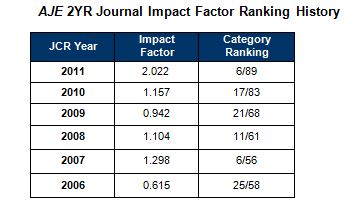

In Thomson Reuters Journal Citation Reports' annual ranking of academic journals, the American Journal of Evaluation (AJE) rose from 17th to 6th in its shared category with 89 interdisciplinary/social sciences journals - resulting in its highest ranking and most impressive gain since 2007 when it competed against one-third fewer publications overall. AJE's articles were cited a total of 606 times last year, both in AJE and in approximately 100 other publications.

Each year, Thomson Reuters indexes roughly 10,100 journals from more than 2,600 publishers from 84 countries, divides them into subject categories and calculates each journal's "impact factor." A journal's impact factor is the average number of times that journal articles in the Thomson Reuters database published in one year cite articles published in the journal in the preceding two years. In the social sciences category, anything over 1.000 is considered high-impact. AJE's impact factor rose from 1.157 last year to 2.022 for 2011.
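As a rough sketch of the calculation described above (the symbols are our own shorthand for illustration, not Thomson Reuters notation), the two-year impact factor for a year $y$ can be written as:

$$
\mathrm{IF}_y = \frac{C_y(y-1) + C_y(y-2)}{N_{y-1} + N_{y-2}}
$$

Here $C_y(t)$ is assumed to denote the number of citations made in year $y$, by indexed journals, to items the journal published in year $t$, and $N_t$ the number of citable items the journal published in year $t$. For 2011, the numerator would count only 2011 citations to AJE items from 2009 and 2010, so it need not equal the 606 total citations mentioned earlier.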

AJE Editor-in-Chief Thomas Schwandt and its publisher, SAGE Publications, have consciously worked to leverage a high impact factor.

"More AJE articles were cited overall and at higher rates than in the past," notes Leah Fargotstein, SAGE Social Science Journals Editor. "Since so many articles were cited, it means that authors are likely reading AJE, finding the research valuable, and building on this research to make their own contributions to the field."

Adds Schwandt: "An article might be cited for any number of reasons, but typically only when it attains a high level of new findings, quality scholarship, or controversy that generates intellectual interest or 'buzz' within a given field. Therefore, the primary strategy for boosting impact factor is to attract and publish only the highest-quality content available. It is important to remember that only citations to an article during the two to five calendar years following its publication have an effect on a journal's impact factor. Therefore, many of the strategies involve actions that are time-sensitive."

What are AJE's Most Cited Articles for 2011?

- Research on Evaluation Use: A Review of the Empirical Literature from 1986 to 2005

- Toward Accurate Measurement of Participation: Rethinking the Conceptualization and Operationalization of Participatory Evaluation

- Unpacking Black Boxes: Mechanisms and Theory Building in Evaluation

- Contemporary Thinking about Causation in Evaluation: A Dialogue with Tom Cook and Michael Scriven

And don't forget, AEA members have 24-hour access not only to current content but to more than 20 years of AJE archives.

Interested in publishing?

At the Evaluation 2012 conference in Minneapolis, AEA's journal editors will lead a workshop providing guidance for authors interested in publishing in AJE and New Directions for Evaluation (NDE). The session (Panel Session 563) includes AJE Editor Thomas Schwandt as well as NDE's Editor Sandra Mathison and incoming editor Paul Brandon and will be held from 1:45 PM to 2:30 PM on Friday, October 26 immediately following AEA's awards luncheon. Mark your calendars now - this is a popular session that attracts a crowd every year. Join Tom and Paul as they discuss the journals, the submission and review process, and keys for publishing success.

Go to AJE's Website Page |

| August Thought Leaders Discussion with New NDE Editor Paul Brandon |

Mark your calendars and join us August 26-September 1 as incoming editor for New Directions for Evaluation (NDE) Paul Brandon leads the Thought Leaders Discussion Series in August.

Brandon is Professor of Education at Curriculum Research & Development Group (CRDG), College of Education, University of Hawaii at Manoa (UHM). During and after completing his PhD in educational psychology at UHM in 1983, he has done assessment and educational program evaluation and research in a variety of settings, including for a non-profit evaluation firm, for the City and County of Honolulu civil service assessment office, for a wealthy educational institution serving native Hawaiian P-20 students and the community, and, since 1989, on the UHM faculty.

With Nick L. Smith, Brandon was co-editor of the 2008 book, Fundamental Issues in Evaluation, and is the current co-editor of the American Journal of Evaluation Exemplars section. He has won two national best-evaluation awards from Division H of the American Educational Research Association (AERA) and in 2011 was designated as a distinguished scholar by the AERA Research on Evaluation Special Interest Group. He succeeds Sandra Mathison as Editor-in-Chief of New Directions for Evaluation in 2013 after spending 2012 learning the journal policies and procedures and helping prepare 2013 issues.

|

| Face of AEA - Meet Tamara Walser, University of North Carolina Wilmington | |

AEA's more than 7,000 members worldwide represent a range of backgrounds, specialties and interest areas. Join us as we profile a different member each month via a short Question and Answer exchange. This month's profile spotlights Tamara Walser, an AEA member, conference proposal reviewer and webinar presenter.

Name, Affiliation: Tamara M. Walser, Director of Assessment and Evaluation for the Watson College of Education and Associate Professor and faculty member in the Educational Leadership Department at the University of North Carolina Wilmington.

Degrees: Ph.D. Research and Evaluation; M.S. Instructional Design and Development; B.A. French

Years in the Evaluation Field: 18

Joined AEA: 1998

AEA Leadership Includes: Leadership Council Member, Organizational Learning and Evaluation Capacity Building TIG, 2009-2011; Conference Proposal Reviewer, 2011, 2009, 2008, 2006; Coffee Break Webinar Presenter: Developing Evaluation Reports that Are Useful, User-Friendly, and Used.

Why do you belong to AEA?

"AEA is my professional home. I've attended every conference since I joined in 1998 and I keep in touch throughout the year through the website, newsletter, blog posts, etc. AEA keeps me current and connected to the field. One of the great benefits of belonging to AEA is the opportunity to network with other evaluators who work across disciplines. I've collaborated on projects with evaluators I've met in person and virtually through AEA. It's not a professional organization, it's a professional community."

Why do you choose to work in the field of evaluation?

"Evaluation is a process that makes meaningful change. It's about inquiry, creativity, and learning. Evaluation design, implementation, and sharing require new ways of thinking, problem-solving, collaborating, and communicating. No two evaluations are ever the same. As an evaluator, I get to facilitate this change process. I also get to turn others onto evaluation and its benefits through teaching, mentoring, and involving clients and other stakeholders in evaluation. I'm very fortunate."

What's the most memorable or meaningful evaluation that you have been a part of and why?

"Several years ago, I led the evaluation of the National Assessment of Educational Progress (NAEP) State Service Center, a center that provides training and support to NAEP State Coordinators across the country. This evaluation stands out for two related reasons. First, throughout the evaluation process, I collaborated with an evaluation work group of key personnel responsible for the different activities of the Center - and that collaboration was powerful! Second, I saw results and recommendations from ongoing formative and annual evaluation used immediately to make the Center better."

What advice would you give those new to the field?

"First, join AEA! It's the best way to stay current and network with others in the field. Second, continually develop your soft skills when working with evaluation clients and other stakeholders, including:

- Persistence: It's your job to keep the evaluation effort on the radar and moving forward.

- Patience: Evaluation-related tasks are not always someone's top priority today, but they may be tomorrow or the next day.

- Sympathy: Showing an understanding of people's busy and complex lives is necessary and appreciated.

- Flexibility: You will not always get your evaluation way. Find compromises that will result in quality evaluation that works for all involved.

- Graciousness: Appreciate, respect, and give thanks to those involved in the evaluation."

If you know someone who represents The Face of AEA, send recommendations to AEA's Communications Director, Gwen Newman, at gwen@eval.org. |

| Have You Registered for Evaluation 2012? |

It's still three months away but registration has officially opened for Evaluation 2012, this year in Minneapolis, MN. Join us October 22-27 as more than 2,000 attendees convene in a city known for its commitment to progress, world-class cultural attractions, and a vibrant community of evaluators waiting to welcome you.

Our professional development workshops, as well as Wednesday sessions, will be held at the Minneapolis Hilton. On Thursday, Friday, and Saturday, we will move to the nearby Minneapolis Convention Center. Sessions at the conference explore this year's theme, Evaluation in Complex Ecologies: Relationships, Responsibilities, Relevance, as well as the full spectrum of topics representing the breadth and depth of the field of evaluation. We welcome attendees from around the world, at any point in their career, and working in any context. Go to the Evaluation 2012 Website Page to view the program and to learn more about this year's theme, presentation types, professional development workshops, travel, and discounted hotel options. |

| Congrats to Recent Evaluation Graduates! |

AEA would like to congratulate and recognize recent evaluation grads receiving their long-awaited and much-anticipated degrees. What can we say other than simply, YAY!! Some are names you know already as they already are active in the field. Those we know who completed their studies between January and June 2012 include:

- Stephanie Evergreen, Western Michigan University

- Maxine Gilling, Western Michigan University

- June Gothberg, Western Michigan University

- Michael Harnar, Claremont Graduate University

- Maria Jimenez, University of Illinois at Urbana-Champaign

What are they up to next?

"I am splitting my time between data communication consulting (and blogging and writing a book for Sage on the topic), directing eLearning and Potent Presentations Initiatives for AEA, and evaluating at The Evaluation Center at Western Michigan University," says Stephanie. "The AEA annual conference was a major launching point for me, in giving me a platform to talk about my passions and connect with others who were headed in the same direction."

June plans to continue her work as a full-time researcher for WMU on a U.S. Department of Education grant and, in the future, to seek a faculty position in the areas of evaluation, research, or statistics. She recalls that early in her academic program, she was encouraged to submit a proposal to AEA and that at her first presentation, she found the organization "especially student friendly with members reaching out to assist."

June adds: "Within the first 24 hours, I was invited to several TIG business meetings and social events. These connections led to greater involvement in the association and to leadership opportunities as a student conference volunteer, a reviewer, a session chair, an ambassador, and a contributor to the Diversity section and Cultural Competence committee." June currently is co-chair of the Disabilities and Other Vulnerable Populations TIG, webmaster for the Mixed Methods Evaluation TIG, and an AEA intern working as the Lead Curator for aea365.

When asked to describe how AEA has impacted her academically and professionally, June stated:

"AEA has given me the opportunity to build my capacity as a professional evaluator. Many AEA members guided, encouraged, and supported me academically. I am especially grateful to Susan Kistler for her determination to promote student involvement in the association, thus creating a culture of learning and growing opportunities I have not found at this level in other professional associations. I plan to be a lifer with AEA."

Congrats to all recent graduates.

If you know of others to add to this list, please email us at office@eval.org. We'll be sure to recognize them in upcoming issues. |

| Evaluation: Seeking Truth or Power? |

AEA members Pearl Eliadis, Jan-Eric Furubo and Steve Jacob are editors of Evaluation: Seeking Truth or Power?, a new book released by Transaction Publishers.

From the Publisher's Site:

"Evaluation has come of age. Today most social and political observers would have difficulty imagining a society where evaluation is not a fixture of daily life, from individual programs to local authorities to parliamentary committees. While university researchers, grant makers and public servants may think there are too many types of evaluation, rankings and reviews, evaluation is nonetheless viewed positively by the public. It is perceived as a tool for improvement and evaluators are seen as dedicated to using their knowledge for the benefit of society."

"The book examines the degree to which evaluators seek power for their own interests. This perspective is based on a simple assumption: If you are in possession of an asset that can give you power, why not use it for your own interests? Can we really trust evaluation to be a force for the good? To what degree can we talk about self-interest in evaluation, and is this self-interest something that contradicts other interests such as "the benefit of society?" Such questions and others are addressed in this brilliant, innovative, international collection of pioneering contributions."

From the Editors:

"The trigger of this book was the fact that evaluation itself has become a very important ingredient in public life. Extensive resources are spent on evaluation. So, it is time to see evaluation as a powerful player in its own right. The book results from a collective reflection of the members of the International Evaluation Research Group (INTEVAL) animated by Ray C. Rist. It's a comparative endeavour that involves 13 authors from eight countries in North America and Europe with different academic backgrounds. By compiling national and international experiences, this book shows that the issue of power in evaluation resonates globally and highlights cases where power relationships play a decisive role in the evaluation process from the beginning (e.g. definition of the mandate, drafting of requests for proposals) to end (e.g. use and misuse of evaluation results)."

About the Editors:

Pearl Eliadis is a human rights lawyer, author and lecturer, and principal of her Montreal law firm. Trained at McGill and Oxford universities, she is admitted to the Quebec and Ontario Bars and teaches Civil Liberties at McGill's Law Faculty.

Jan-Eric Furubo has published many works on evaluation methodology, the role of evaluation in democratic decision making processes and its relation to budgeting and auditing.

Steve Jacob has been a professor in the Department of Political Science at Laval University since 2004. He was the director of the Master in Public Affairs program (2006-2010) and president of the Interfaculty Research Ethics Committee (2006-2010). He is the director of PerƒEval, the research laboratory on public policy performance and evaluation at Laval University.

Go to the Publisher's Site |

| Have You Cast Your Vote? | |

The 2012 election is underway for AEA President and three Board Members-at-Large. Voting ends Friday, August 3. To view this year's ballot and cast your vote, click here. It takes just minutes and makes a huge difference in the future of the organization. If you haven't logged in already, you'll need your username and password, shown below. Thanks in advance!

Username:

Password: |

| Evaluation Fun - Musical Memories of Evaluation 2011 & Other Eval Ditties | |

It's summertime, so let's have fun! Did you know there's a jingle on aea365 about evaluation, complete with video? It was a spirited rendition led by Mel Mark, AEA's 2006 President and infamous evaluation lyricist. The song was sung to the tune of American Pie by Don McLean at the closing session of AEA's annual conference in Anaheim. You can see it here along with Impact Blues by Terry Smutylo and another ditty by colleagues from New Zealand. Know of another? Forward it on to Newsletter Editor Gwen Newman at gwen@eval.org.

A long, long time ago...

I can still remember

Group assignment used to make me smile.

And I knew that with point oh-5 chance

That I could find sig- nif- i- cance

And, maybe, better programs for a while......

For all the lyrics, click here. |

New Member Referrals & Kudos - You Are the Heart and Soul of AEA!

| | Since January 1, 2012, the AEA new member application has asked how each person heard about the association. It's no surprise that the most frequent response is from friends or colleagues. You, our wonderful members, are the heart and soul of AEA and we can't thank you enough for spreading the word.

Thank you to those whose actions encouraged others to join AEA in June. The following people were listed explicitly on new member application forms:

Liya Aklilu * Emily Bourcier * Katrina Brewsaugh * Sally Brown * Valentine Cadieux * Matt Keene * Jean King * Rene Lavinghouze * Barbara MkNelly * Maihan Nijat * Noorullah Noori * Gabrielle O'Malley * Geri Peak * Tricia Piechowski * Rebekah Rhodes * Liliana Rodriguez-Campos * Robert Stake * |

|

New Jobs & RFPs from AEA's Career Center | |

What's new this month in the AEA Online Career Center? The following positions have been added recently:

- Program Evaluation and Research Manager at Minnesota Office of Higher Education (St. Paul, MN, USA)

- Analyst, New Diagnostics at The Clinton Health Access Initiative (New Delhi, Delhi, INDIA)

- Teacher Incentive Fund External Evaluation at Kansas City, Missouri School District (Kansas City, MO, USA)

- Call for Expressions of Interest; Evaluation of UNICEF's (Emergency) Preparedness Systems at UNICEF (New York, NY, USA)

- Sr Research Scientists (Workforce Development) at Social Dynamics LLC (Gaithersburg, MD, USA)

- Assessment and Educational Research Associate at University Corporation at CSU Monterey Bay (Seaside, CA, USA)

- Research Assistant, Labor Management at 1199SEIU Family of Funds (New York, NY, USA)

- Assessment Consultant/Trained CLASS Observer at Right to Play (New York, NY, USA)

- Research Assistant at The Findings Group LLC (Decatur, GA, USA)

- Results Programme Manager at TradeMark East Africa (Nairobi, KENYA)

Descriptions for each of these positions, and many others, are available in AEA's Online Career Center. According to Google Analytics, the Career Center received approximately 3,800 unique visitors over the last 30 days. Job hunting? The Career Center is an outstanding resource for posting your resume or position, or for finding your next employer, contractor or employee. You can also sign up to receive notifications of new position postings via email or RSS feed.

|

| About Us | | The American Evaluation Association is an international professional association of evaluators devoted to the application and exploration of evaluation in all its forms.

The American Evaluation Association's mission is to:

- Improve evaluation practices and methods

- Increase evaluation use

- Promote evaluation as a profession and

- Support the contribution of evaluation to the generation of theory and knowledge about effective human action.

phone: 1-508-748-3326 or 1-888-232-2275

|
