Newsletter: May 2012 | Vol 12, Issue 5

Relationships, Responsibilities, and Relevance

Colleagues in AEA,

In May, I was invited to attend the Eastern Evaluation Research Society (EERS) and Canadian Evaluation Society (CES) conferences, held in Absecon, New Jersey, and Halifax, Nova Scotia, respectively. The EERS theme was Evaluation in a Complex World: Balancing Theory and Practice, and the CES theme was Valuing Difference. Both themes are relevant to evaluation in the complex ecologies of the second decade of the 21st century. More than ever, whether we are in New Jersey, Nova Scotia, Northern Cape (South Africa), or Nesodden (Norway), we evaluators continue to pay attention to the relationships, responsibilities, and relevance of our work.

In response to Jennifer Greene's EERS keynote presentation on the relationship between theory and practice, Nick Smith reminded us not to rush to balance theory and practice, but rather to understand both evaluation theory and evaluation practice better. As I took his comment, each deserves more methodological, practical, and reflective understanding. At CES, Martha McGuire spoke on a best practices panel about logic models and the recognition of diversity. Her presentation offered alternative visual representations of these graphic conceptual models that are more contextually and culturally responsive to indigenous and "visible minority" Canadian populations.

On the second day of the CES conference, I visited the site of what was once Africville. It was a community of African Nova Scotians (resettled, formerly enslaved African Americans and other black people of the African diaspora) that lived within the city limits of Halifax from as early as the 1830s. In the 1960s, the families were uprooted and their homes demolished in the name of urban renewal and integration. The site is now a peaceful dog park and green space on the north end of the city. A few mementos remind residents and tourists of what once was: a memorial at the entrance of the park and a replica of the Seaview African United Baptist Church. There is also a trailer that likely sits there as a protest against the relocation of nearly 50 years ago.

Searching further during my brief stay in scenic and memorable Halifax, I found an evaluation report (that's right!) in the archives of the Black Cultural Centre of Nova Scotia. It was written in 1971 by Donald Clairmont (Dalhousie University) and Dennis Magill (University of Toronto), and it documents the stories of the Africville residents whose homes and lands were expropriated. According to one website dedicated to the report, this Dalhousie Institute of Public Affairs report serves as a seminal primary source document for those interested in "local and Canadian history, African Canadian studies, law, sociology, social work, municipal politics and public administration, urban planning, and environmental racism." It was sponsored by the Nova Scotia Department of Public Welfare, in association with the Department of National Health and Welfare. The report was prepared to address an underlying question: whether the relocation had succeeded or failed from the relocatees' point of view.

Among the findings, nearly two-thirds of relocated respondents said that the relocation produced a personal crisis. The relocation also created significant challenges related to household composition. A third of respondents reported illness related to relocation pressure. Nearly half had lived in two homes within a few years of leaving Africville.

Today, the spirit of Africville lives on through a 2010 apology from Mayor Peter Kelly. It also lives on through an agreement between the Halifax Regional Municipality and the Africville Genealogy Society that settled a number of issues arising from the continuing relocation dispute. The terms included land, the renaming and maintenance of the park, community development, and a $3 million contribution. Arguably, this spirit lives on because an evaluation report understood the relevance of relocation and renewal in the context of social betterment in North America. Its social science evaluators also took responsibility for ensuring the report would have more far-reaching use and value than was likely expected of it.

In the spirit of memories and memorials, and of the ways evaluation can help ensure that meaningful relationships remain at the core of what we do,

Rodney

Rodney Hopson

AEA President 2012


AEA's Values - Walking the Talk with Sandra Mathison, NDE Editor


Are you familiar with AEA's values statement? What do these values mean to you in your service to AEA and in your own professional work? Each month, we'll be asking a member of the AEA community to contribute her or his own reflections on the association's values.

AEA's Values Statement

The American Evaluation Association values excellence in evaluation practice, utilization of evaluation findings, and inclusion and diversity in the evaluation community.

i. We value high quality, ethically defensible, culturally responsive evaluation practices that lead to effective and humane organizations and ultimately to the enhancement of the public good.

ii. We value high quality, ethically defensible, culturally responsive evaluation practices that contribute to decision-making processes, program improvement, and policy formulation.

iii. We value a global and international evaluation community and understanding of evaluation practices.

iv. We value the continual development of evaluation professionals and the development of evaluators from under-represented groups.

v. We value inclusiveness and diversity, welcoming members at any point in their career, from any context, and representing a range of thought and approaches.

vi. We value efficient, effective, responsive, transparent, and socially responsible association operations.

I am a Professor of Education at the University of British Columbia where I continue my lifelong engagement in learning and teaching about evaluation. I am also currently Editor-in-Chief of New Directions for Evaluation, have served on various AEA committees, and have been a member of the AEA Board of Directors.

As evaluation theory and practice have taken root and spread around the globe, it is exciting to see AEA adopting a Values Statement that reflects the importance of our intellectual and moral obligations as an organization and a profession. As Editor-in-Chief of one of AEA's journals, I see these values as critical signposts for fostering the involvement, at many levels, of diverse perspectives. These values are important in how I do my work, which includes assembling a team of associate editors and editorial board members that has a global reach and reflects the cultural diversity that AEA's values encourage. These values also shape the content of the journal. I have worked to include different points of view about evaluation, the perspectives of both experienced and novice evaluators, and the perspectives of evaluators from all parts of the world.

AEA's Values Statement is also an important anchor for me individually, in doing evaluation as well as teaching about evaluation. From the beginning of my career until the present I have been guided by AEA's values. I strive to foster inclusion through participatory approaches, to practice in ethically defensible ways through transparency and thoughtfulness, and to do evaluation in the service of both clients and the greater good.

I contribute to AEA's value of continual development of evaluators through my teaching and mentoring of graduate students as they learn the craft of evaluation. I am always mindful that my task is not simply to transmit knowledge and skills to the next generations of evaluators. It is also to imbue novice evaluators with the foundational values on which AEA stands. As a professional organization, AEA provides useful guidance and reminders that evaluation is much more than a technical practice; it is also a moral and values-laden one. In my teaching I am eager for students to see themselves as ethically engaged and as open to and engaged with many forms of diversity. I also want them to see their future work as evaluators as meaningful and useful in building a better world.

Policy Watch - Balancing Evaluation Theory and Practice

From Eleanor Chelimsky, Evaluation Policy Task Force Member

One of the most difficult tasks for AEA's Evaluation Policy Task Force is to present a more-or-less united position as we speak to government agencies about evaluation policy. We need to represent AEA, with all its remarkable diversity and freedom of opinion, and therefore include AEA's divisions as well as its agreements in our policy approaches.

A recent suggestion that AEA develop a set of "best practices" for agency and evaluator use may provide a way to confront one of our most difficult and important divisions: that between theory and practice. A good relationship between the two has been hard to achieve in most fields, and that has certainly been the case for us. Evaluation theory and practice appear to function in different universes, with theory concentrating on principles and methods, and practice on the real world of people, priorities, politics and power. But because evaluation theory is the foundation of evaluation practice, "best practices" can't really be developed without a careful, systematic look at how well we've integrated theory and practice, at the problems we've encountered in doing that, at the solutions brought by practitioners to those problems, and at the generalizability of those solutions.

Theory and practice are interdependent: each one learns from the other and, in that learning process, both are inspired to stretch, to bend a little, to grow. Further, that interdependence endows both with legitimacy: theory protects practice from singularity and anecdotalism, while practice protects theory from abstraction. We need them both.

Now might be a good time for AEA to revisit the question of how we balance theory and practice in our work. In particular, we might ask how we increase the amount of "new knowledge that can be empirically generalizable at the same time that it is relevant to specific real-life contexts" (Fischer, 1991).

Four measures taken by AEA could help us here:

(1) A specialized, annual forum at AEA to present often-encountered practitioner experience that challenges theory in some implicit or explicit way.

(2) The collection of brief, informal reports by evaluators, documenting problematic practitioner encounters with theory. This would enable the development over time of a database of on-going problems, and also enable the generalizations necessary for "best practice." A panel of experienced evaluators would choose among these for presentation at the AEA forum (above).

(3) A blog, listserv, or Google+ hangout. This would allow ongoing conversation and advice to keep us current, dynamic, and innovative vis-à-vis thought/practice interactions.

(4) An annual debate at AEA on a specific theory/practice balance issue: for example, how do we define "replication" for scale-up purposes? How do we strengthen the external validity of a randomized controlled trial?

By engaging in these four activities, we at AEA might be able to get to a place in our work where theory and practice support each other better. This could improve the quality of evaluative information by increasing its credibility and usefulness. But it would also strengthen the evaluation process itself, create an appropriate foundation for developing "best practices," and set up conditions, through on-going conversations and debate, for the future growth and development of our field.

Go to the Evaluation Policy Task Force Page

Diversity - Cultural Competence Statement Turns One & Look Who's Talking!

From Karen Anderson, AEA's Diversity Coordinator Intern

Guess what's turning one! In April 2011, the AEA general membership approved the AEA Public Statement on Cultural Competence in Evaluation. The Statement was born out of a recommendation by the Building Diversity Initiative (BDI), a joint effort of AEA and the W.K. Kellogg Foundation to "engage in a public education campaign to emphasize the importance of cultural context and diversity in evaluation for evaluation-seeking institutions." One year later, the work is being carried on by the AEA Public Statement on Cultural Competence in Evaluation Dissemination Working Group, tasked with making the Statement accessible to facilitate dialogue about cultural competence in evaluation.

Where are they talking?

Last month I attended a professional development session hosted by the Atlanta-Area Evaluation Association, Taking a Stance Towards Culture: Cultural Competence in Evaluation, which explicitly built upon the Statement's guidance. Dara Schlueter of ICF International addressed issues for novice evaluators, stressing that although many of the challenges of culturally competent evaluation at the community level are not specific to new evaluators, it is especially important for new evaluators to be aware of challenges they may face (and potential solutions). According to Schlueter, "Trying to be culturally competent in different settings, it's a journey and not something that you arrive at one day. We should always try to understand the communities we are working with. This is important for new evaluators to learn so we can embed this in the work that we do."

Ashani Johnson-Turbes, senior manager at ICF International, provided a list of tips for developing culturally competent programs and evaluations. They included:

- Specify your target audience(s) and culture(s)

- Conduct background research to better understand audience cultures, beliefs and behaviors

- Understand the nuances of subcultures, language and even dialect within your target audience(s)

- Engage target audience(s) early and often as you develop program concepts, messages, and materials, as well as ways to disseminate the program, and evaluation tools

- Pay attention to (health) literacy as you create program messages, materials, and evaluation plans

- Incorporate appropriate images of the cultural audience

- Collaborate with community partners/leaders/gatekeepers to implement and evaluate programs

Johnson-Turbes also suggested developing strategic partnerships, community workshops and events, and media activities as strategies to build "sustainable relationship with the community members."

The AEA Public Statement on Cultural Competence in Evaluation has come a long way - from identifying a need through the Building Diversity Initiative (BDI) and the AEA Diversity Committee, to its development by the AEA Public Statement on Cultural Competence Task Force, to today's Dissemination Working Group! The Statement is generating discussion and reflection as evaluators strive not only to consider it in practice, but to internalize its lessons as part of practice. Thanks to all who contributed to its development and to those working today to increase dissemination and use. Happy Birthday Cultural Competence Statement!

If you would like to share your story or comments about how you're using AEA's Public Statement on Cultural Competence in Evaluation, please email me at karen@eval.org.

eLearning Update - eStudy Attracts Non-Conference Attendees

From Stephanie Evergreen, AEA's eLearning Initiatives Director

One justification often used for offering online professional development is that it makes learning available to people who otherwise wouldn't be able to travel. Indeed, this justification was part of our thinking in the launch of the Professional Development eStudy program. We offer 3- or 6-hour online courses on a range of evaluation topics. Our online presenters are typically the same experts featured in our face-to-face workshops at the annual conference.

As evaluators, you know we can't resist the urge to figure out whether our initial assumptions about the benefits of online learning were accurate. Is our online Professional Development eStudy program attracting people who otherwise don't attend the annual conference? What share of the people who attend our eStudy courses haven't attended the conference in the last two years? 71%. That's right - 71%.

At the time of this writing, 620 unique people have registered for at least one of our eStudy courses. Just 180 of those people registered for the conference in either 2010 or 2011. That leaves 440 unique individuals - 71% - who are now accessing evaluation professional development through AEA. We know that some of those attracted to the eStudy cannot attend the annual conference simply due to logistical barriers like geography or economy. We also know that some of our eStudy registrants had not even heard of AEA prior to getting wind of a particular webinar that interested them. Whatever the reason they hadn't been part of our audience before, the lovely appeal of online professional development is that it does, indeed, extend our ability to make learning about evaluation accessible.
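For readers who like to check the numbers, the 71% figure follows directly from the two counts above. The following is a minimal Python sketch; the variable names are illustrative only.

```python
# Counts reported above: unique eStudy registrants, and the subset of
# those who also registered for the 2010 or 2011 annual conference.
total_registrants = 620
conference_registrants = 180

# People reached through eStudy who did not register for either conference.
estudy_only = total_registrants - conference_registrants
share = estudy_only / total_registrants

# Format the share as a whole-number percentage.
print(f"{estudy_only} of {total_registrants} ({share:.0%})")
```

Running it prints `440 of 620 (71%)`. The exact share is about 70.97%, which rounds to the 71% reported.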

So what's ahead on the eStudy schedule? In June, we'll feature Intermediate Developmental Evaluation with Michael Quinn Patton (but hurry - registration closes June 4) and Introductory Consulting Skills with Gail Barrington (registration closes June 8). In July we're excited to bring in Michelle Revels, who will discuss Focus Groups. In August, Kylie Hutchinson will talk about Effective Reporting Techniques. And in September and October, we'll host Beginner and Intermediate Social Network Analysis (respectively) with Kimberly Fredericks. Details and registration are available by clicking the link below.

Go to the eStudy Website Page

Light Your Ignite Training Webinar - Register Now!

Conference notices don't go out until July 3, but we have already opened registration for two online training webinars on Ignite sessions. Ignite sessions are a new addition to the set of session types presented at the 2012 AEA annual conference. At just 5 minutes long, they demand a well-prepared presenter and a tightly structured presentation. In a 30-minute training webinar, held twice, AEA's eLearning Initiatives Director Stephanie Evergreen will describe the preparation, logistics, development, and delivery of an Ignite presentation. The training will include a demonstration of a full Ignite presentation and leave ample time for audience questions.

Register for Tuesday, July 17, 11-11:30 am ET, or

Register for Thursday, July 26, 4-4:30 pm ET

Can't make either? The webinar will be recorded and posted to AEA's eLibrary for future access.

This training is part of the Potent Presentations Initiative - an ongoing training series on how to message, design, and deliver conference presentations.

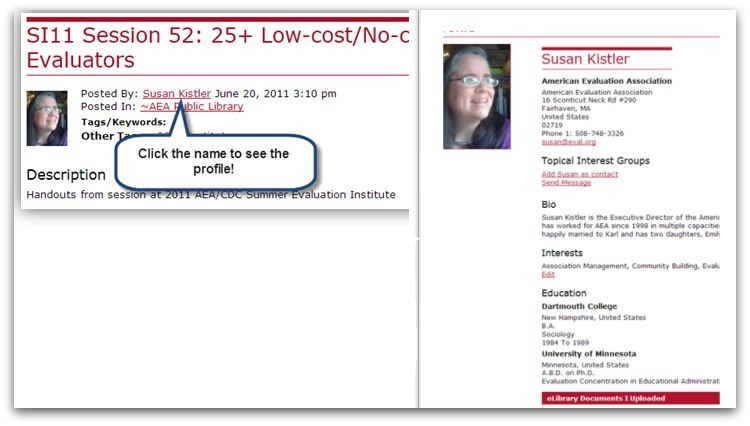

Using AEA's eLibrary to Expand Your Network

Hello all! My name is Kate Golden and I am excited to be one of AEA's newest staff members. My role focuses on sustaining the evaluation community through connecting people and resources. As such, I spend much of my time exploring AEA's vast array of resources, from our journals, to AEA 365 Tip-a-Day posts, to our CoffeeBreak Webinar archives, looking for opportunities to create connections that will enhance our community of practice.

Believe me when I say there is a LOT of information out there.

One of our most extensive resources is our eLibrary which holds, among other materials, slides, papers, posters and reports shared at past AEA Evaluation conferences. Not only is it a great resource, but it offers an opportunity to expand your professional network!

If you are perusing eLibrary posts, looking for a new or exciting tidbit of information, take a look at the profile of the individual who posted to learn more about that individual's interests, affiliations, and other contributions to the eLibrary.

It's a great opportunity to expand your professional network and gain some insight from someone who has expertise on a topic of interest. Because our members are diverse in background, experience, relationship to evaluation, and even geographic location, you may find someone of interest whom you might not cross paths with otherwise.

For those of you who are members and have not yet taken the opportunity, consider taking a minute of your time to fill in your profile so that others can find you and take advantage of your knowledge and expertise. To update your profile:

- Go to the AEA eLibrary.

- Sign in by clicking on the "View members only content" link in the upper right hand corner and entering your username and password when prompted.

- Click on "My Profile" in the menu under the page's header OR "Profile" in the little box in the upper right that shows you are logged in.

- Under each section of your profile you'll see an option to edit it - fill it out to tell others more about your bio, interests, education, and job history.

If you run into challenges, I can help! Send me an email at kate@eval.org.

Face of AEA - Meet Cindy Crusto, Cultural Competence Cheerleader

AEA's more than 7,000 members worldwide represent a range of backgrounds, specialties and interest areas. Join us as we profile a different member each month via a short Question and Answer exchange. This month's profile spotlights Cindy Crusto, an AEA member for more than a decade and a champion who's helped spearhead the development of AEA's Cultural Competence in Evaluation Statement.

Name, Affiliation: Cindy A. Crusto, Ph.D., Yale University School of Medicine, Department of Psychiatry

Degrees: Ph.D., clinical-community psychology, University of South Carolina

Years in the Evaluation Field: 21 years

Joined AEA: 2000

AEA Leadership Includes:

2011-present, Chair, Public Statement on Cultural Competence in Evaluation, Dissemination Working Group

2008-2011, Chair, Cultural Competence in Evaluation Task Force, Diversity Committee

2006-2008, Member, Cultural Competence in Evaluation Task Force, Diversity Committee

2005-2006, Member, Cultural Reading Task Force on the Evaluation Standards, Diversity Committee

Why do you belong to AEA?

"At my first AEA conference in 1996, I felt AEA was a great professional home. In 2000, I applied for and was awarded an MIE student travel award. My mentor was Hazel Symonette, and again, I felt that AEA presented wonderful learning and mentoring opportunities. I've been fortunate to have AEA members help me become involved over the past 12 years."

Why do you choose to work in the field of evaluation?

"Program evaluation is one activity that supports my professional training in clinical-community psychology. I view program evaluation as a form of action research in which evaluation (or field research) is conducted to address real-world issues/challenges. Evaluation capacity-building is a way of giving psychology and skills away so that organizations and communities can better monitor their outcomes and address their needs. Program evaluation also is a way for me to meaningfully contribute to bettering social programs, particularly for children and underserved populations and communities."

What's the most memorable or meaningful evaluation that you have been a part of - and why?

"There are several, but my first two program evaluations stick out. My first project was required for a program evaluation course taught by James Cook in my M.A. program in clinical-community psychology at UNC-Charlotte. I coordinated and evaluated the Neighborhood Assistance Project, a pilot effort funded by the United Way of Central Carolinas and the Foundation for the Carolinas. The project sought to take leadership development into three low-income neighborhoods for the purpose of strengthening community associations and their problem-solving capabilities. The project sought to strengthen neighborhood organizations, and each organization/community was required to address a neighborhood challenge. I learned to conduct and evaluate trainings, conduct needs assessments, engage and partner with community residents and association leaders, and navigate the politics of working in neighborhoods. This project set the stage for my future work in developmental, participatory, and empowerment evaluation.

"In my doctoral program (mid-to-late 90s) at the University of South Carolina, I worked with Abe Wandersman and Robert Goodman evaluating several CSAP-funded substance abuse prevention coalitions in SC. This was an amazing time because Abe, David Fetterman, and others were developing empowerment evaluation and our research team contributed to empowerment evaluation's ideas and concepts. I learned about partnerships with stakeholders; the integration of program development, implementation, and evaluation; CQI processes; the importance of knowledge transfer between the science and the practice of prevention and how evaluators can be integral to that process; how to build agencies' evaluation capacity; and how to be a 'critical friend.'"

What advice would you give to those new to the field?

"Obtain as much practical experience as you can, particularly in different national and international settings."

If you know someone who represents The Face of AEA, send recommendations to AEA's Communications Director, Gwen Newman, at gwen@eval.org.

Communicating with Clients - How Do You?

How do you communicate with your clients? AEA likes to spotlight samples of great client and stakeholder communications. Here we connect with Rakesh Mohan of Idaho's Office of Performance Evaluations (OPE), who shares more about his office's outreach to constituents via newsletters.

"When I started as the director of the Office of Performance Evaluations at the end of 2002, OPE did not have a newsletter. We issued our first newsletter in July 2003. We believe a newsletter is an excellent way to communicate the office's vision, goals, efforts, and accomplishments with all kinds of stakeholders, including the legislature, our primary audience for whom we work. Our newsletter allows us to show our accomplishments as well as share more about ongoing and upcoming work. I also use the newsletter as an education tool. Under the section 'From the Director,' I have discussed various concepts and issues such as independence, objectivity, responsiveness, credibility, accountability, good government, excellence, and performance measurement."

The OPE newsletter typically contains four pages of content and is usually sent twice a year to an audience that includes:

- Legislators

- Senate and House staff

- Legislative staff (budget, financial audit, bill drafting and research, IT, etc.)

- Governor, special assistants and staff (financial management, budget administrator, etc.)

- State Controller and division chiefs

- Attorney General and division chiefs

- State agency directors

- Local university professors

- Consultants

- Media

- National colleagues

Generally, each issue includes the following features:

- From the Director

- Recently Completed Reports

- Upcoming Evaluation and Follow-up Reports

- National Recognitions

- Performance Measurement

"Some of the feedback we get indicates a better appreciation for the kind of work we do, a better understanding of state initiatives and their end results, and a better informed constituency as well as the public."

"All of our newsletters are available here. We've also recently connected with followers through Twitter, Facebook, and LinkedIn."

To share samples of the ways that you interact with your audiences, email AEA's Communications Director, Gwen Newman, at gwen@eval.org. We'd love to share the ways you communicate via this column as well as through AEA's online eLibrary. Thanks!

Statistics Without Borders - Pro Bono Initiatives Undertaken Internationally

Recently, thanks to AEA member June Gothberg, representatives from AEA and Statistics Without Borders (SWB) met to discuss potential collaborative efforts. Notes June, "I am passionate about both AEA and SWB and saw possibilities of working together for the betterment of both groups - and for the international programs served by SWB. SWB is doing some amazing work that really makes a difference." The meeting was a success and now we'd like to introduce you to SWB.

SWB is an apolitical, all-volunteer outreach group of the American Statistical Association. The group was formed in 2008 to provide pro bono statistical support to organizations involved in international health issues. Although the group started out small, it has quickly grown to involve over 500 volunteer statisticians around the world who provide pro bono consulting. Note that we define "health" very broadly.

The mission of SWB is to achieve better statistical practices in areas such as:

- research and evaluation design

- using or exploring existing data

- statistical analysis

- survey design

- sampling

- developing data gathering instruments

- developing scales and indicators

- developing in-house statistical capabilities

- using statistical software

This commitment helps to ensure that international health projects and initiatives are delivered more effectively and efficiently. SWB seeks opportunities to provide statistical assistance in situations without adequate statistical support, or where volunteer expertise may exceed what is available to the non-profit group or government agency.

Examples of recent projects include:

- Earthquake assistance in Haiti. SWB partnered with SciMetrika to assist on a cell phone survey to assess the economic impact of the earthquake. The goals were to estimate the employment status of the Haitian population and the change in that status, and to estimate aspects of the current housing situation. Our work was featured on National Public Radio: http://media.theworld.org/audio/060120109.mp3.

- Millennium Villages Project in rural Africa. In collaboration with Columbia University's Earth Institute, SWB is working with the Millennium Villages Project on a cluster randomized study of health and development interventions in 14 African countries involving 60,000 households.

- Sierra Leone. SWB is working on a long-term project with UNICEF to evaluate health interventions in Sierra Leone. SWB assisted with the design of the baseline survey, data cleaning, and survey weighting. Ongoing work includes design and data analysis for a post-intervention survey.

- Evaluation of the Rotary Oceania Medical Aid for Children. The evaluation committee is quantitatively assessing this program, looking in particular at the psychosocial well-being of the children and their caregivers.

- Developing a Clear Strategy for the Use of Statistical Software in Developing Nations. A major obstacle preventing local statisticians in developing nations from conducting adequate statistical analyses is limited access to sophisticated statistical software.

Getting involved:

SWB is seeking volunteers, as well as organizations and projects that need assistance with statistical issues in non-profit, health-related work. For more information about SWB, to propose a potential project, or to volunteer, visit the SWB website or Facebook page, or email g.shapiro4@verizon.net.

Evaluation Roots: A Wider Perspective of Theorists' Views and Influences

AEA member Marvin Alkin is the editor of Evaluation Roots: A Wider Perspective of Theorists' Views and Influences, a new book published by SAGE with an interesting twist all its own: all royalties have been generously assigned to AEA. Thank you, Marv, for your thoughtfulness!

From the Publisher's Site:

"Evaluation Roots: A Wider Perspective of Theorists' Views and Influences, Second Edition, provides a contemporary examination of evaluation theories and traces their evolution. Marvin C. Alkin shows how theories are related to one another, especially in terms of how new theories build on existing ideas. The way in which these evaluation 'roots' grow to form a tree helps provide readers with a better understanding of evaluation theory. In addition to the editor's overview, the book contains essays by leading evaluation theorists that describe the evaluators' personal theories and how they were developed."

New to the Second Edition:

- Twenty-four chapters are new or substantially revised, providing readers with fresh insights and updated reflections from the key theorists who have shaped the field.

- The book now includes international perspectives in chapters on evaluation theory in Europe, Australia, and New Zealand.

From the Author:

"The first edition of Evaluation Roots in 2004 grew out of a concern that there was no systematic examination of individual evaluation theoretical stances and the way in which they related to each other. This 2nd edition updates the listing of individual theorists from 22 to 25 and adds a consideration of theorists from Europe as well as from Australia/New Zealand."

"Most rewarding," he notes, "was the willingness of the most influential figures in evaluation to participate in this project. There is no other text that so completely examines the nature of evaluation theory, the relationship between theories, and provides original writings by all of these theorists."

About the Author:

Marvin C. Alkin, a professor emeritus at the University of California, Los Angeles, received his Ed.D. from Stanford University in 1964. His research revolves around evaluation utilization, evaluation theory, and the difficulties one faces when evaluating educational programs. His publications include Evaluation Essentials: From A to Z, The Costs of Evaluation, Debates on Evaluation, and Using Evaluations. For more information, visit http://gseis.ucla.edu/people/alkin.

Go to the Publisher's Site

When to Use What Research Design

AEA members Dianne Gardner and Lynne Haeffele are co-authors of a new book, When to Use What Research Design, published by Guilford.

From the Publisher's Site:

"Systematic, practical, and accessible, this is the first book to focus on finding the most defensible design for a particular research question. Thoughtful guidelines are provided for weighing the advantages and disadvantages of various methods, including qualitative, quantitative, and mixed methods designs. The book can be read sequentially or readers can dip into chapters on specific stages of research (basic design choices, selecting and sampling participants, addressing ethical issues) or data collection methods (surveys, interviews, experiments, observations, archival studies, and combined methods). Many chapter headings and subheadings are written as questions, helping readers quickly find the answers they need to make informed choices that will affect the later analysis and interpretation of their data."

Useful features include:

- Easy-to-navigate part and chapter structure.

- Engaging research examples from a variety of fields.

- End-of-chapter tables that summarize the main points covered.

- Detailed suggestions for further reading at the end of each chapter.

- Integration of data collection, sampling, and research ethics in one volume.

- A comprehensive glossary.

From the Authors:

"In our joint work as researchers, evaluators, and educators we often had lively discussions about research designs. The idea of working together on When to Use What Research Design emerged out of some of those conversations about how to conduct our work. We found that we shared many beliefs about effective research methods, and our differences were complementary. As researchers, we were weary of many of the usual methodological controversies, particularly the tired old Qualitative/Quantitative divide. Our approach is to ask research questions about real-world problems that require creativity and intellectual curiosity. The research questions guide the methodological choices, not commitment to particular designs, techniques, or epistemologies."

"As evaluators, we understand that our work must be useful to different audiences who have their own ideas about data and how to use it - whether it is coded as numbers, symbols, or words. That was our second shared insight. As educators, we know that the research choices can be difficult for novices or for colleagues hoping to work in a new area. What's often missing is advice about what methods to use that falls between the extremes of nuts-and-bolts instructions and discussions that are too theoretical to be practical. This book bridges this gap."

About the Authors:

The co-authors, along with colleague Paul Vogt, are based in the Department of Educational Administration and Foundations at Illinois State University in Normal, Illinois.

Go to the Publisher's Site

Volunteer Opportunities


We have three groups starting their work at this time. Letters of interest for each of the three must be submitted on or before Wednesday, July 11.

- Ethics Working Group: Help to steer and guide the ethics work of the association

- Diversity Working Group: Help to steer and guide the diversity work of the association

- Student and New Evaluators Advisory Team: Lend your voice to planning for the services and initiatives aimed at students and novices

Learn more about each option, and how to submit your name for consideration, on our volunteer opportunities page.

Go to AEA's Volunteer Opportunities Page


New Member Referrals & Kudos - You Are the Heart and Soul of AEA!

Since January 1, 2012, the AEA new member application has asked how each person heard about the association. It's no surprise that the most frequent response is "from a friend or colleague." You, our wonderful members, are the heart and soul of AEA, and we can't thank you enough for spreading the word.

Thank you to those whose actions encouraged others to join AEA in April. The following people were listed explicitly on new member application forms:

Brad Astbury * Sally Bond * Carlos Bravo * Diane Burkholder * Kristine Chadwick * Cheyanne Church * Kathleen Douglas England * Paula Frew * Charles Gasper * Sarah Gill * June Gothberg * Christina Groark * Meg Hargreaves * Liza Kasmars * Colleen Kidney * Birgitta Larseen * Jennifer Lefing * Hillary Loeb * Colleen Lucas * Pat McArdle * Katherine McDonald * Courtney Malloy * Carrie Markovitz * Julia Melkers * David Merves * Jose Luis Palma * Cheryl Poth * John Putz * Lisa Rajigah * Elisabeth Ris * Teri Schmidt * Charles Smith * Flora Stephansen * Kenzie Strong * Murari Suvedi * Dan Violetta * Paul Winters


Evaluation Humor - School's Out for Summer!

Enjoy your summer, everyone! And our thanks to Chris Lysy for his cartoon contribution. If you have something you'd like to share, feel free to forward it to our Newsletter Editor, Gwen Newman, at gwen@eval.org.


New Jobs & RFPs from AEA's Career Center

What's new this month in the AEA Online Career Center? The following positions have been added recently:

- Director: Clinical Faculty Position at New York University (New York, NY, USA)

- Health Information Systems Implementation Specialist at I-TECH (Seattle, WA, USA)

- Research Associate/Evaluator at Office of Educational Innovation & Evaluation (OEIE) at Kansas State University (Manhattan, KS, USA)

- Senior Evaluation Specialist: Biodiversity and Conservation Project at Devex (Washington, DC, USA)

- Assessment Analyst/Advisor at University of California San Diego (San Diego, CA, USA)

- Senior Monitoring and Evaluation Advisor at Global Health Fellows Program II (Washington, DC, USA)

- Program Officer at Robert Wood Johnson Foundation (Princeton, NJ, USA)

- Post-doctoral Education Fellowship at Cleveland Clinic Lerner College of Medicine (Cleveland, OH, USA)

- Project Manager, Market Research and Evaluation at NEEA (Portland, OR, USA)

- Qualitative Research Manager at Louisiana Public Health Institute (New Orleans, LA, USA)

Descriptions for each of these positions, and many others, are available in AEA's Online Career Center. According to Google Analytics, the Career Center received more than 4,000 unique visitors over the last 30 days. Job hunting? The Career Center is an outstanding resource for posting your resume or position, or for finding your next employer, contractor, or employee. You can also sign up to receive notifications of new position postings via email or RSS feed.


About Us

The American Evaluation Association is an international professional association of evaluators devoted to the application and exploration of evaluation in all its forms.

The American Evaluation Association's mission is to:

- Improve evaluation practices and methods

- Increase evaluation use

- Promote evaluation as a profession

- Support the contribution of evaluation to the generation of theory and knowledge about effective human action.

phone: 1-508-748-3326 or 1-888-232-2275
