Newsletter: February 2012 | Vol 12, Issue 2

A Word from the AEA President - Priority Setting

Greetings AEA colleagues,

Beginning with our Winter Board meeting in Minneapolis earlier this month, it has been an incredibly busy couple of months for the Board as we set priorities for the Association this year. These include deciding on implementing a post-Priority Area Team (PAT) structure, responding to learnings from the policy-based governance transition evaluation report, and carrying on the work of the International Listening Project. In all three instances, we expect to share more with you in the coming weeks as these important priorities take effect.

At the winter meeting, we had the opportunity to hear from our Minnesota affiliate representation, specifically Leah Goldstein, who brought greetings and excitement about what is shaping up to be a huge team effort from local arrangements chair Jean King and a host of other volunteers. Each month from now on, I will use this newsletter to feature some aspect of the conference to give you an idea of what to expect at the conference, 22-27 October, 2012.

With the Call for Proposals due on Friday, 16 March, consider this your invitation to design a session that directly speaks to our conference theme, "Evaluation in Complex Ecologies: Relationships, Responsibilities, Relevance."

2012 Sessions focusing on the conference theme are designed to posit and promote innovative thinking regarding where and how our current evaluation practices/approaches can inform improved social outcomes in increasingly complex ecologies. We hope to elevate our thinking on the importance of relationships, responsibilities, and relevance in the context of the ecological complexity of our communities, regions, country and world. We place high importance on 'innovative presentations' that will assist evaluation practitioners to:

- Better understand the different dimensions of the ecologically diverse environment in which we practice and its effect on data 'objectivity' as a potential driver of program and policy decisions;

- Define the opportunities, challenges and drivers of social and economic disparities and assess the current capacity of evaluation practitioners to effectively address them;

- Expand our awareness and use of research methodologies and literature, particularly in fields that are seldom included in evaluation thinking; and

- Engage the AEA community in ways that actively encourage their participation as knowledgeable social science professionals committed to societal betterment.

To submit a session focusing on the conference theme - or on any aspect of the breadth and depth of the field of evaluation - please go to the Evaluation 2012 conference website. All proposals should be submitted for review by one of AEA's Topical Interest Groups and the TIGs will forward on the very best sessions focusing on Evaluation in Complex Ecologies for consideration for inclusion in the Presidential Strand.

With all good wishes as we prepare for what AEA hopes will be an exciting conference in the fall,

Rodney

Rodney Hopson

AEA President 2012

Policy Watch - New Developments in Dept of State Program Evaluation Policy

From Susan Kistler, AEA Executive Director

On February 23, 2012, the United States Department of State issued an updated 2012 Program Evaluation Policy that replaces its May 12, 2011 Policy. This policy applies to all State Bureaus as well as the State Department's Office that oversees the U.S. President's Emergency Plan for AIDS Relief (PEPFAR).

The 2012 Department policy explicitly references and notes its alignment with USAID's evaluation policy, building on a foundation established in 2011. Senior AEA Evaluation Policy Task Force (EPTF) Policy Advisor George Grob noted in a November 2011 AEA Policy Watch column that the authors of the 2011 USAID policy publicly acknowledged the influence of AEA's Evaluation Roadmap. Both the USAID and Department of State policies come on the heels of three years of focused outreach on the part of the EPTF.

The 2012 State Department Evaluation Policy expands on the 2011 policy guidance. Most notably, there is a new section added on Methodological Rigor. I have included it here in its entirety, due to the significance of the change:

Methodological Rigor: Evaluations should be "evidence based," meaning that they should be based on verifiable data and information that have been gathered using the standards of professional evaluation organizations. Such data can be both qualitative and quantitative. Bureaus can use a wide variety of evaluation designs such as case studies, experimental and quasi experimental designs as well as data collection methods such as key informant interviews, surveys, direct observation or focus group discussions. Regardless, such data must pass the tests of reliability and validity which are different for qualitative and quantitative data.

This section explicitly references adherence to professional evaluation organization standards. Along with the statement's valuing of a variety of methodologies and designs and of both qualitative and quantitative data, these are significant additions. These changes echo the stance urged in the Roadmap and by AEA's EPTF representatives, as do several other of the revisions to this evaluation policy.

Other notable additions to the 2012 State Department Policy include:

- An extended section devoted to providing definitions of key terms that draws a clear distinction between monitoring and evaluation;

- An explication of the value of evaluation for both accountability and learning purposes, an important change that both emphasizes the role of evaluation in program improvement, decision-making, and transparency in government, and shows more sophistication about the different purposes and perspectives of evaluation than is typically seen in agencies;

- A requirement "to evaluate two to four projects/programs/activities over the 24-month period beginning FY2012;"

- A strengthening of the language around independence and integrity that notes the problems and conflicts inherent in situations when evaluators are supervised by the manager of the program being evaluated; and

- An extension of the guidance as to the location in the Department of responsibilities for funding, implementation and oversight of evaluation, as well as for technical support and capacity building that links evaluation to ongoing management of programs, projects, and activities.

The EPTF is pleased to see this policy progression and the strong example that it sets for those working across the federal sector.

Go to the EPTF Website page

AEA's Values - Walking the Talk with Victor Kuo

Are you familiar with AEA's values statement? What do these values mean to you in your service to AEA and in your own professional work? Each month, we'll be asking a member of the AEA community to contribute her or his own reflections on the association's values.

AEA's Values Statement

The American Evaluation Association values excellence in evaluation practice, utilization of evaluation findings, and inclusion and diversity in the evaluation community.

i. We value high quality, ethically defensible, culturally responsive evaluation practices that lead to effective and humane organizations and ultimately to the enhancement of the public good.

ii. We value high quality, ethically defensible, culturally responsive evaluation practices that contribute to decision-making processes, program improvement, and policy formulation.

iii. We value a global and international evaluation community and understanding of evaluation practices.

iv. We value the continual development of evaluation professionals and the development of evaluators from under-represented groups.

v. We value inclusiveness and diversity, welcoming members at any point in their career, from any context, and representing a range of thought and approaches.

vi. We value efficient, effective, responsive, transparent, and socially responsible association operations.

Hi. I'm Victor Kuo, currently a Senior Consultant in Strategic Learning and Evaluation at FSG, an international consulting firm dedicated to discovering better ways to solve social problems. I also serve on the AEA Board of Directors and have been an AEA member for more than 10 years. I'm delighted to contribute to AEA's ongoing commitment to promoting and putting into practice values of excellence, utilization, and diversity and inclusion in evaluation.

As one of your current board members, I have the privilege of helping guide the development of the association's policies and strategic directions. One of the association's current efforts is to explore its role in the international community of evaluators, evaluation users, and the general public. Last year, a task force assembled by the board initiated the AEA International Listening Project, and with the assistance of AEA members, we sought member input through surveys, interviews, and a Think Tank session at Evaluation 2011 in Anaheim. The project was grounded in all of AEA's stated values, its mission, and its current goals; in particular, the project drew its inspiration from AEA's valuing of a global and international evaluation community and how evaluation is practiced in diverse contexts. Every day, it becomes more apparent in the news, commerce, politics, technology, and entertainment that my life in Seattle is increasingly dependent on what happens in Beijing, Dubai, and Frankfurt. Inevitably, our community of evaluators finds opportunities to use our skills and perspectives to positively impact social and environmental change globally. We also encounter opportunities to connect with and learn from our colleagues worldwide. The change we seek must be guided by core values that recognize and respect diverse perspectives and that promote ethical and socially responsible human and collective behavior. As we embark on our journey together in 2012, with hopes for a more harmonious and peaceful world, we invite your feedback and comment on how AEA can enhance its role in and relationship with an international community of evaluators and evaluation users. Stay tuned for more details. I look forward to engaging with you!

Face of AEA - Meet Michelle Kobayashi, Independent Consultant

AEA's more than 7,000 members worldwide represent a range of backgrounds, specialties and interest areas. Join us as we profile a different member each month via a short Question and Answer exchange. This month's profile spotlights Michelle Kobayashi, an independent consultant who will be leading an upcoming eStudy workshop on developing effective surveys.

Name, Affiliation: Michelle Miller Kobayashi, Vice President at National Research Center, Inc. in Boulder, Colorado

Degrees: BA in Psychology (Bethel College), MSPH (University of Colorado Health Sciences Center)

Years in the Evaluation Field: Since 1989 (23 years)

Joined AEA: 2008

AEA Leadership Includes: Coffee Break/eStudy Presenter and half and full-day workshop presenter

Why do you belong to AEA?

"I spent many years of my career knowing few people who did what I did. When I tell people I am an evaluator, most give me a blank stare. Quite frankly, most of my family and friends don't really understand what I do. AEA is a way to find, connect and learn from others who choose our same unique work."

Why do you choose to work in the field of evaluation?

"I love evaluation work because my interests are varied and great. Evaluation allows me to specialize in methodologies rather than content so I can move from topic to topic -- continually learning. I also enjoy the ability to apply my knowledge across domains. I find many of my projects (and recommendation sections) share very common themes - even with seemingly unrelated content. I think evaluators have a very influential role in shaping programs because we often approach a topic with a broader and more systematic approach."

What's the most memorable or meaningful evaluation that you have been a part of - and why?

"I have been fortunate in that my job has allowed me to participate in hundreds of evaluations. I have studied topics including positive youth development, K-12 education, community food security, government performance, alternative transportation, successful aging, obesity prevention, palliative care, alcohol and drug abuse, rural health care, interpersonal violence, urban design, racial bias, organizational capacity building, technology integration and more.

"It is difficult to say which is most meaningful although an area that is always near and dear to my heart is community livability. This is not necessarily a single evaluation or assessment but a theme that seems to find its way into much of my work. I love system-level approaches to solving community problems - and community livability is often part of the puzzle.

"I also enjoy projects that involve the development and use of evaluation toolkits. I like system level solutions to all parts of my work."

What advice would you give to those new to the field?

"Read your textbooks on methodology and statistics, but also understand that we work in the real world. The days of gold-standard methods will likely be gone once you leave academia. In our profession with small budgets, rushed timelines and a need for immediate actionable data - we focus on optimal rather than perfect research. It takes more creativity and knowledge to design "optimal" so you need to be prepared and courageous."

If you know someone who represents The Face of AEA, send recommendations to AEA's Communications Director, Gwen Newman, at gwen@eval.org.

New Website Gives Inside Look at Evaluation in Philanthropy

How do evaluations really unfold in philanthropy? A brand new website provides a rarely seen, insider look through assorted case studies, articles, papers, reports and other rich resources. Though the online Evaluation Roundtable repository made its debut just this month, the initiative's origin dates back nearly 25 years.

The Evaluation Roundtable began in 1988 when only a handful of foundations had staff focused on evaluation. Patti Patrizi, then head of evaluation at The Pew Charitable Trusts, gathered the few other evaluation staff at foundations with the goal of sharing experiences and in essence establishing the role and practice of evaluation within foundations. Patrizi is now principal at her consulting firm Patrizi Associates and serves as a Senior Fellow at Public/ Private Ventures in Philadelphia.

"The best way I can think about the role of evaluation directors in these positions is that they serve as interlocutors between program leaders and evaluators, covering the range of political, organizational, technical and program issues," says Patrizi, who serves as chair of the Roundtable. "We were setting up the terms so that the relationship could flourish."

The Evaluation Roundtable soon grew and today evaluation and program staff from up to 30 foundations attend by-invitation-only gatherings, where they often discuss a case study that candidly documents a major evaluation or original "benchmarking" research on foundation effectiveness. Participants, who are almost exclusively foundation staff, at these closed sessions can honestly discuss the difficulties of their job and grapple with common issues, Patrizi says.

The Evaluation Roundtable website, officially launched in February 2012, contains case studies discussed at these meetings as well as benchmarking research and other materials not made public. The case studies provide an inside and unvarnished glimpse into the workings of philanthropy and the decisions that foundations make, and many focus on managing change or conflict on serious evaluation issues.

"The evaluation of the Robert Wood Johnson Foundation's Fighting Back program, which was an $88 million initiative to harness community strategies to reduce the use and abuse of alcohol and illegal drugs, is a terrific case in terms of understanding the core practice of evaluation where you have to understand goals, manage conflict, and determine what is important information," Patrizi says. "It's a great illustration of a fundamental disagreement between program designers and evaluators about what the proper unit of analysis was and should have been."

Patrizi said that she hopes the new Evaluation Roundtable website will be a resource for people teaching evaluation as well as those interested in learning more about foundation strategy and decision making. She has seen an immediate interest in the website from those attending the Roundtable, with many citing plans to use the case material in staff development programs.

Go to the Evaluation Roundtable Website

Meet 2012 Presidential Strand Co-Chairs

Meet 2012 Presidential Strand Co-Chairs Fiona Cram and Ricardo Millett. They have the distinct honor of conceptualizing this year's conference theme, Evaluation in Complex Ecologies: Relationships, Responsibilities, Relevance, as selected by AEA's 2012 President Rodney Hopson - and then inviting keynote speakers to headline the event and planning special sessions that run throughout.

Ricardo brings more than 40 years of experience in program evaluation. He is a Principal Associate at Community Science, a Maryland-based research and development organization that works with governments, foundations and non-profits, and is the owner of Millett & Associates. Fiona is a lifelong New Zealander, as well as a mother and evaluator who currently serves as Director of Katoa Ltd, a research and evaluation company. She has a PhD from the University of Otago in Social and Developmental Psychology and her interests include health and wellness, research and evaluation methods and ethics, organizational capacity development, and new technologies.

"I feel privileged to serve as a co-chair for the Presidential Strand and I welcome the opportunity to help shape the selection of strand sessions that will inspire and inform AEA audiences and members on issues of interest and importance to making evaluation useful to the evolution of a more just and equitable society," says Ricardo.

Adds Fiona: "I am thrilled to be involved in next year's conference, both to support Rodney and to be immersed in how people respond to his conference theme. We only have to think about our own families to realize that we are participants in complex ecologies on a day-to-day basis. We now have an invitation to reflect on what this means for evaluation practice and to make explicit some of the factors that might otherwise sit below the surface of the work we do.

"For me the notion that the world is about relationships is not new. Indigenous peoples are connected by a web of genealogical relationships, both with each other and with their environment. It is our responsibility to these relationships that infuses our evaluation practice. As an evaluator my response is often to highlight the complexities of the circumstances in which people find themselves. Evaluations under these circumstances need to represent people's circumstances, efforts and successes well so that evaluative findings are relevant for all stakeholders.

"For me, both personally and professionally, I hope the 2012 conference is a time to share with, and learn from, my colleagues about how they are thinking about these issues. The world is changing and as evaluators we need to be relevant. Part of this is being able to dialogue about, and participate in complex ecologies."

To learn more about the Evaluation 2012 conference, to be held October 24-27 in Minneapolis, Minnesota, with workshops before and after, click here. The deadline for this year's Call for Proposals is Friday, March 16. To touch base with Fiona or Ricardo personally, you can send emails to fionac@katoa.net.nz or ricardo@ricardomillett.com. They welcome your ideas and suggestions and we look forward to hearing from you and seeing you at this year's event!

TechTalk - What is Cloud Computing?

From LaMarcus Bolton, AEA Technology Director

You may be wondering - what is this nebulous "cloud" you have heard more chatter about recently? "The cloud" refers to cloud computing, which provides online storage services to users. The service gives users easy accessibility to various data such as photos, documents, presentations, spreadsheets, videos, music, and a variety of other file types. The possibilities are endless! The best part of it all is that all of this data is stored on a secure remote server. This means that you do not necessarily need to fill up your precious hard drive space; all you need is a computer and Internet access!

How can "the cloud" be of use to you as an evaluator? Well, have you ever been to a conference only to realize that you forgot to save the latest version of your presentation on your flash drive? Or worse, amid all the craziness of traveling, have you ever forgotten your presentation altogether? This could be a complete nightmare! With cloud computing, you can instantly access your files - regardless of where you are - directly via the Internet. You will also always have a virtual backup of your files in the event of damage to the original file or, as in this case, simple forgetfulness.

Have you ever had three or more colleagues working on one evaluation project at the same time? It can quickly become a hassle sending emails back and forth, especially if all of the individuals are making edits simultaneously; there may be multiple versions of the same document floating around and no one may know what the latest update is. Cloud computing makes simultaneous collaboration easy.

At AEA HQ, we use cloud computing on a daily basis. For example, as noted in our 25 Low-cost/No-cost Tools for Evaluation session, Google Docs is a staple part of our workflow. It allows us to share and collaborate easily and in real-time between staff using its online office suite. We also use services, like Dropbox, which provide automatic synchronizing of our computers' files. These files are then accessible through both the web and your mobile device.

But we perhaps should also bring up the downsides. To access the cloud, you must have Internet access - occasionally a challenge in some venues (including at the AEA conference), and on planes, etc. Also, since the materials are in the cloud, on a third-party server, they also are not on your private hard disk - so read carefully before using any cloud service to ensure that their security and privacy settings are acceptable for your work context.

This article just scratches the surface on what evaluators can do and how they can get started with cloud computing. Please feel free to share how you use it with us as well. Also, if you have any questions about how to jump on the cloud computing bandwagon, please do not hesitate to contact me at marcus@eval.org.

The opinions above are my own and do not represent the official position of AEA nor are they an endorsement by the association.

Looking for Materials from AEA's 2011 Conference in Anaheim, California?

Hello friends! My name is Kate Golden and I am one of AEA's eLibrary curators. Did you know that our eLibrary contains more than 255 items from our 2011 Evaluation Conference? Presenters uploaded slides, papers, posters, and reports to share their far-ranging experiences and expertise with you! Three of my favorites (in no particular order) include:

Did you also know that we had over 1000 presenters at the 2011 Conference? That is a lot of knowledge, expertise, and materials that is NOT represented in the repository. If you are a past presenter, please consider adding your work to the eLibrary. You are welcome to upload PDF files, Word documents, or other common types of files.

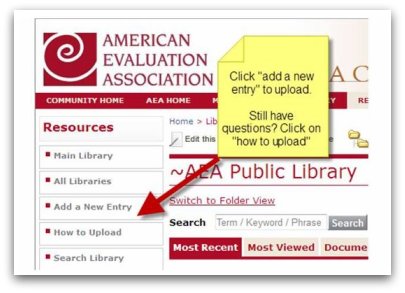

Here are three steps to upload your materials:

- Log on to AEA's eLibrary using your AEA Username and Password (forgot your username or password? Go here and enter the email you have on file with AEA to have them sent to you).

- If you are a pro, click on "add a new entry" on the left hand side of your screen.

- If you'd like some coaching, click on "how to upload" for a very cool short video that will walk you through this process.

That's it. If you run into challenges, I can help! Send me an email at kate@eval.org.

Methodological Approaches to Community-Based Research

AEA member David S. Glenwick is co-editor of Methodological Approaches to Community-Based Research, a new book published by the American Psychological Association.

From the Publisher's Site:

"Methodological Approaches to Community-Based Research offers innovative research tools that are most effective for understanding social problems in general and change in complex person-environment systems at the community level. Methodological pluralism and mixed-methods research are the overarching themes in this groundbreaking edited volume, as contributors explain cutting-edge research methodologies that analyze data in special groupings, over time, or within various contexts. As such, the methodologies presented here are holistic and culturally valid, and support contextually grounded community interventions.

"This volume features web appendices that include a variety of research applications (e.g., SPSS, SAS, GIS) and guidelines for the accompanying data sets. The extensive illustrations and case studies in Methodological Approaches will give readers a comprehensive understanding of community-level phenomena and a rich appreciation for the way collaboration across behavioral science disciplines leads to more effective community-based interventions."

From the Editors:

"Recent decades have seen a dramatic increase in the number of professionals, including program evaluators, involved in community-based research," says Glenwick. "However, the methodologies used in such activities have not kept pace with the development of theory and techniques pertaining to data collection and analysis. Unfortunately, few research methods textbooks take an applied focus, and none have had as their focus cutting-edge methodologies for analyzing community-level data or included practical step-by-step illustrations of how to carry out such analyses. Therefore, the current volume was created in order to present a range of innovative methodologies relevant to community-based research, as well as examples of the applicability of these methodologies to specific social problems. The individual chapters provide an introduction to the theory behind, the procedures involved in, and the applicability of each of the nine methodologies presented. Taken together, the chapters highlight the complexity of community-based phenomena and the benefits of analyzing them from multiple perspectives involving the grouping of data (e.g., cluster analysis, meta-analysis), change over time (e.g., time series analysis, survival analysis), and contextual factors (e.g., multilevel modeling, epidemiologic approaches, geographic information systems)."

About the Editors:

The volume was co-edited by Leonard A. Jason and David S. Glenwick. Dave is a professor of psychology at Fordham University, where he is a former director of the graduate program in clinical psychology and associate chair for graduate studies. His consultation activities have involved preschool, special education, and extended care facilities, among others. He is a fellow of seven divisions of the American Psychological Association and has been an AEA member since 1985.

Go to the Publisher's Site

OPEG Event May 18 - Communicating Results Effectively

The Ohio Program Evaluators' Group will hold its annual Spring Exchange on Friday, May 18, at the Quest Center, 8405 Pulsar Place, Columbus, Ohio from 9 AM - 4 PM. The 2012 theme is Communicating Results Effectively: Making Our Findings Stick.

This year's invited keynote speaker is John Nash, associate professor in Educational Leadership Studies at the University of Kentucky and Director of the Laboratory on Design Thinking in Education (dLab). He is a current board member of the Evaluation Network for the Missouri River Basin, the program chair for the American Evaluation Association's (AEA) newest topical interest group, Data Visualization and Reporting, and the former program chair for AEA's Nonprofit and Foundation Evaluation group. Learn more about John Nash on LinkedIn or by viewing his AEA coffee break webinar.

A Call for Proposals is open through Friday, March 9. Details on types, length, and focus of presentations can be viewed online. In addition to presentations by evaluation colleagues, one session will include a panel of policy/decision makers who will discuss aspects of evaluation communications that have been influential and impactful. The session will include an opportunity for the audience to ask the panelists questions. The event will also feature an Ignite session.

For more information about the conference, to register, or to submit a proposal for a presentation, visit the OPEG website. A detailed agenda for the day will be available online in late March.

Go to the OPEG website

Data Den: Twitter, Hashtags and NodeXL - Find Us & Follow Us!

Welcome to the Data Den. Sit back, relax, and enjoy as we deliver regular servings of data deliciousness. We're hoping to increase transparency, demonstrate ways of displaying data, encourage action based on the data set, and improve access to information for our members and decision-makers. This month, we're looking at AEA's twitter network and the use of NodeXL for social network analysis.

You may not even have known that AEA is on twitter, but we may be found @aeaweb. Twitter accounts are referenced by the '@' sign followed by the account username. If you aren't on twitter, go ahead and click through on the username links - you won't be signed up for anything, but will get a feeling for the type of exchange. @aeaweb has over 1500 followers and regularly shares information about evaluation resources and discussions from multiple sources, funding and grant opportunities, and updates about AEA events and services. If you subscribe to AEA's weekly Headlines and Resources list, or to our listserv EVALTALK, you have seen a cleaned up version of AEA's twitter postings, collected and shared each Sunday.

In their native form, most Twitter posts include hashtags. Hashtags are a sort of embedded keyword tagging so that those with a common interest may find one another and discuss common topics. Those discussing evaluation usually use "#eval." Examining the users of a hashtag can help us better understand the actors within that social network.

The network map below shows the connections among the users of the #eval hashtag. The vertices, or points of intersection where you see little icons, each represent someone with a Twitter account and are sized by the number of followers - the larger the size, the more people following the account. The edges, or connections, show relationships among the users. AEA is at the heart of this network, represented by the AEA logo icon, but there are lots of other key actors and I have highlighted a few. To the left of AEA you can find the World Bank's Independent Evaluation Group @WorldBank_IEG, a regular contributor to the discussion in multiple languages. Just below AEA, you'll find Pablo Rodriguez @txtPablo, who focuses on development and complexity from Argentina, and AEA member Katherine Dawes @KD_eval, who works at the US Environmental Protection Agency. While both have fewer followers than AEA or the IEG, they are core influencers in the network, at the heart of sharing and discussions. Further afield, we find AEA members Tom Kelly @tomeval from the Annie E. Casey Foundation and John Nash @jnash, who focuses on design, technology, and innovation (and evaluation!). Tom and John both have large followings of their own and multiple relationships that cross boundaries.

This network map was created using NodeXL, a free add-in for Microsoft Excel. We're using NodeXL to help us track changes in our network over time and to identify key colleagues influencing that network. We'd like to thank Marc Smith @marc_smith from the Social Media Research Foundation for his assistance in creating and interpreting the map.
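For readers curious about what sits behind a map like this, the vertex-and-edge idea can be sketched in a few lines of Python. This is only a rough illustration and not how NodeXL works internally: the account names, follower counts, and connections below are made-up placeholders, not data from the #eval network.

```python
from collections import defaultdict

# Hypothetical accounts and follower counts (placeholders, not real data).
# On the map, follower count would set the size of each vertex.
followers = {"@hub": 1500, "@connector": 300, "@outlier": 4000, "@newcomer": 50}

# Hypothetical edges: each pair is a relationship between two hashtag users.
edges = [
    ("@hub", "@connector"),
    ("@hub", "@outlier"),
    ("@hub", "@newcomer"),
    ("@connector", "@newcomer"),
]


def degree_counts(edge_list):
    """Count how many connections (edges) touch each vertex."""
    degree = defaultdict(int)
    for a, b in edge_list:
        degree[a] += 1
        degree[b] += 1
    return dict(degree)


degree = degree_counts(edges)

# Rank accounts two ways: by connections within the network, and by followers.
by_connections = sorted(followers, key=lambda u: (-degree.get(u, 0), u))
by_followers = sorted(followers, key=lambda u: (-followers[u], u))

print("Most connected:", by_connections)
print("Most followed: ", by_followers)
```

In this toy example the most-followed account is not the most connected one, which mirrors the point above: an account with fewer followers can still sit at the heart of sharing and discussion.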

Want to learn more? AEA members Johanna Morariu @j_morariu, Shelly Engelman, and Tom McKlin have recently talked about their use of NodeXL on aea365, and AEA's Social Network Analysis TIG explores a range of SNA issues, tools, and uses.

|

Volunteer to Serve as a Reviewer for Evaluation 2012 Proposals

AEA will receive over 1000 proposals for our annual conference, Evaluation 2012, to be held in Minneapolis, Minnesota, this coming October. Each proposal is reviewed by at least two reviewers and the review process is overseen by AEA's 40+ Topical Interest Groups. Proposal review is done during the month of April and involves reading and rating a set of proposals within a particular topical area.

Reviewers perform a vital function in helping the association select quality content for our premier event.

If you would like to be considered to be a reviewer, there are two ways to sign up:

1. If you are submitting a conference proposal, sign up to be added to the potential reviewer list right on the proposal submission form.

2. If you are NOT submitting a proposal, please review the list of TIGs and then complete the short form at http://www.eval.org/ProposalReviewer.asp. Please note that each TIG selects its own reviewing team.

When you sign up to indicate your interest in reviewing, your name will be forwarded to the TIG program chair, but you may or may not be chosen as a reviewer. If the TIG leaders wish to seek your assistance, they will contact you directly with further information and details about reviewing for that TIG. Serving as a reviewer is a wonderful way to be or get involved in the life of AEA!

|

New Member Referrals & Kudos - You Are the Heart and Soul of AEA!

As of January 1, 2012, we began asking on the AEA new member application how each person heard about the association. It's no surprise that the most frequent response is from friends or colleagues. You, our wonderful members, are the heart and soul of AEA and we can't thank you enough for spreading the word.

Thank you to those whose actions encouraged others to join AEA in January. The following people were listed explicitly on new member application forms:

Gail Barrington * Joseph Bauer * Ayesha Boyce * Christopher Cameron * Christina Christie * Cheyanne Church * Anne Clark * Peter Dahler-Larsen * David Devlin-Foltz * Lynn Gannon Falletta * Joy Frechtling * Quinn Gentry * Don Glass * Nancy Grudens-Schuck * Michelle Jay * Burke Johnson * Tara Kirkpatrick * Kelly Laurila * Laura Linnan * Linda Morra-Imas * Leah Neubauer * Kathryn Newcomer * Michael Newman * Tom Nochajski * Jane Peters * Joel Philp * Elena Polush * Sharon Rallis * Iris Smith * Valerie Underwood * Sharon Wasco * Gill Westhorp * Cynthia Williams * Jennifer Yourkavitch

|

|

New Jobs & RFPs from AEA's Career Center

What's new this month in the AEA Online Career Center? The following positions have been added recently:

- Evaluation Specialist at School of Medicine at the University of Texas Health Science Center at San Antonio (San Antonio, TX, USA)

- Part Time Senior Evaluation Officer at Institute of International Evaluation (New York, NY, USA)

- Academic Research Consultants Wanted to Assist Graduate Students at The Dissertation Coach (Los Angeles, CA, USA)

- Sr Director of Research & Analytics at Colorado Health Institute (Denver, CO, USA)

- Director of Institutional Research at Palo Alto University (Palo Alto, CA, USA)

- Evaluation Data Analyst at Chicanos Por La Causa (Phoenix, AZ, USA)

- Senior Manager, Impact Strategies at YMCA of the USA (Chicago, IL, USA)

- Evaluation Expert at US Agency for International Development (Washington, DC, USA)

- Monitoring & Evaluation Statistician at Millennium Challenge Account Lesotho (Maseru, LESOTHO)

- Senior Evaluation Specialist at Asian Development Bank (Manila, PHILIPPINES)

Descriptions for each of these positions, and many others, are available in AEA's Online Career Center. According to Google Analytics, the Career Center received more than 4,100 unique visitors over the last 30 days. Job hunting? The Career Center is an outstanding resource for posting your resume or position, or for finding your next employer, contractor, or employee. You can also sign up to receive notifications of new position postings via email or RSS feed.

|

About Us

The American Evaluation Association is an international professional association of evaluators devoted to the application and exploration of evaluation in all its forms.

The American Evaluation Association's mission is to:

- Improve evaluation practices and methods

- Increase evaluation use

- Promote evaluation as a profession and

- Support the contribution of evaluation to the generation of theory and knowledge about effective human action.

phone: 1-508-748-3326 or 1-888-232-2275
