Newsletter: June 2011 | Vol 11, Issue 6

Engaging Respectfully with Diversity

Greetings Colleagues,

It is midsummer time in the northern hemisphere - a time to celebrate the light, the warmth, and the nurturance of the sun. I have the great privilege of spending this midsummer in Scandinavia. The sun barely sets each day, and many of the people barely sleep. And in these northern lands, midsummer itself is an important holiday time for gatherings of friends and family. The multiplicity of our world's climates, rituals, and celebrations - both between and within our cultures and countries - does indeed enrich our global society.

In parallel fashion, this multiplicity of perspective and experience also enriches the contexts in which evaluators work. Diversity is a hallmark of evaluation contexts, as every context includes a diversity of evaluation stakeholders, from policy makers to program deliverers to program participants. This diversity is anchored in the different stances that stakeholders take toward a program, and it is also shaped by their distinct interests and values, backgrounds and cultures. Engaging respectfully with such diversity is an important challenge of evaluation practice, one that is directly related to this year's theme of values and valuing in evaluation.

To help meet this challenge respectfully and effectively, AEA now has a member-endorsed public statement on Cultural Competence in Evaluation. Sincere thanks and appreciation to all who contributed to this statement and to the members who supported it! This statement not only provides guidance for evaluators (especially those based in the US) in engaging appropriately with cultural diversity in the evaluation process; it also "informs the public of AEA's expectations concerning [the role of] cultural competence in the conduct of evaluation." This role includes fulfilling an ethical commitment to fairness and equity in evaluation for all stakeholders and generating valid and trustworthy evaluation inferences. Further, "in a diverse and complex society, cultural competence is central to making a difference."

May your midsummers (and midwinters for our southern hemisphere colleagues) offer time with people and places that matter to you, and a bit of a time out from the busy-ness of daily life.

Best,

Jennifer

Jennifer Greene

AEA President, 2011

jcgreene@illinois.edu

Policy Watch - Rounding Out U.S. International Evaluation Policy

From George Grob, Consultant to the Evaluation Policy Task Force

In April, we described a major breakthrough in U.S. evaluation policy for international development. The U.S. Agency for International Development (USAID) had announced a new evaluation policy to expand and improve the conduct and use of evaluation as an integral part of USAID's foreign assistance programs.

On May 12, another shoe dropped. The State Department issued an even broader, overarching policy covering all of its programs, including diplomacy as well as foreign assistance. Its importance is highlighted in the opening paragraph:

"The policy supports the Department's goal of connecting evaluation, an essential function of effective performance management, to its investments in diplomacy and development to ensure they align with the agency's overarching strategic goals and objectives."

Important features of this policy include:

- requiring that all major programs be evaluated at least once;

- requiring each bureau to prepare an annual evaluation plan that includes: a list of projects and programs and the strategic goal(s) they support; a status report on current evaluation efforts and resources and on recently completed evaluations; a plan for conducting new evaluations; and a discussion of proposed use and dissemination;

- recognizing the need for and requiring arrangements for the funding of evaluation. (The policy notes that "the cost of an evaluation will vary by program, and no set amount is prescribed, although industry averages suggest that 3-5% of the program cost is a reasonable baseline.");

- linking evaluations to strategic and program planning;

- making the Chief Performance Officer responsible for reviewing Bureau evaluation plans;

- recognizing the importance of both internal and independent evaluations;

- recognizing the importance of evaluation for new program requests;

- maintaining an archive of completed evaluations; and

- encouraging posting of evaluation reports and results on the Department's intranet.

I describe this new policy as "another" rather than "the other" shoe dropping because U.S. international programs are not limited to the State Department (including USAID). Significant international policies and programs are formulated and carried out by other departments and agencies, including but not limited to the Departments of Agriculture, Health and Human Services, Defense, and Labor. U.S. international evaluation policy will not be fully developed until these other organizations make evaluation an essential feature of their programs as well. This is already beginning to happen in the President's Emergency Plan for AIDS Relief (PEPFAR) and in the Centers for Disease Control and Prevention's programs for malaria and tuberculosis. A more recent development is growing interest in the use of evaluation in the international food programs of both USAID and the Department of Agriculture. Evaluation, including the relevance of AEA's Evaluation Roadmap, is being considered for discussion at an upcoming International Food Assistance Development Conference, also taking place this month in Kansas City, Missouri.

AEA has been at the forefront of advancing evaluation policy in U.S. international programs, and we are pleased to see ideas such as those expressed in AEA's Evaluation Roadmap, as well as by our colleagues in the various Federal departments and agencies, take root.

AEA Member Leader Retreat

From Beverly Parsons, Co-Chair, AEA's Retreat Planning Team and Executive Director, InSites

More than 40 AEA member leaders gathered in Atlanta on June 15-16 to identify principles, structures, and processes for how members can be meaningfully engaged in the board's governance responsibilities, with a special focus on its responsibility to evaluate AEA's policies, programs, and activities.

The group consisted of the current Priority Area Teams (PATs), the board, and several other member leaders identified by president-elect Rodney Hopson. The PATs are an interim mechanism through which members can actively provide input and guidance on issues before the board as it moves forward under the policy-based governance structure it approved in 2008.

As AEA President Jennifer Greene pointed out at the beginning of the retreat: "Some of us are enthusiastic about the potential of policy-based governance to enable AEA to be more strategically present in the world outside our association, helping us be advocates and champions for evaluation in this world. And some of us are skeptical about losing cherished parts of our identity and our history amidst a preoccupation with policy and governance."

Through a set of structured group activities, retreat participants deliberated the character of meaningful member engagement and brainstormed various structures through which members could engage in evaluation of AEA's policies, programs, and activities. Participants actively, creatively, and thoughtfully offered their wisdom and experiences to these challenges and contributed in proactive and integrative ways. Specifically, the retreat served to:

- Build respectful, appreciative relationships among the retreat participants, many of whom did not previously know one another.

- Harness the collective wisdom about the leadership and structures that have been used over the years as AEA has grown from a small informal group of evaluators to the more than 6,500 members of today.

- Better illuminate how AEA's growth has led to changes in the roles and responsibilities of the board; the executive director and staff; and member committees and task forces.

- Build a shared knowledge of policy-based governance, through hands-on activities and practical experiences, as well as ponder the greater impact that the field of evaluation can have on policy governance more broadly.

- Generate principles for member engagement in policy-based governance activities that adhere to our values and that contribute to fulfillment of board responsibilities.

- Generate practical ideas about member engagement in evaluating policies related to the annual conference and the link between programs and AEA's stated goals.

- Put in place a follow-up activity through which PAT members will review the functions of their current teams and determine what structures and processes would be most appropriate moving forward.

PAT members will take the next step in determining the structures and processes for future member engagement in AEA governance by reviewing their teams' functions and recommending ways those functions can be carried out effectively within the framework of policy-based governance and the principles of member engagement generated at the retreat. A subgroup of PAT members will synthesize the PATs' recommendations and provide the board with an overall recommendation about such structures and processes. The board hopes to consider these recommendations at its November meeting.

If you'd like to participate in this process or comment, I invite you to contact me directly at bparsons@insites.org. We welcome your input.

Face of AEA - Meet Luisa Guillemard Gregory

AEA's 6,500 members worldwide represent a range of backgrounds, specialties and interest areas. Join us as we profile a different member each month via a short Q&A. This month's profile spotlights Luisa Guillemard Gregory.

Name: Luisa Guillemard Gregory

Affiliation: Department of Social Sciences, Psychology Program, University of Puerto Rico, Mayaguez Campus (UPRM)

Degrees: M.S., Clinical Psychology; Ph.D., Educational Psychology

Years in the Evaluation Field: Since 2003

Year Joined AEA: 2008

Why do you belong to AEA?

"AEA is the appropriate professional forum for evaluators to share experiences, network, and gain knowledge about what is emerging in the field. I have found that AEA members stand out as professionals who communicate and share their findings in very practical ways. Every time I go to an AEA meeting, I always bring back many ideas that I can put into practice in the design of evaluations."

How did you become involved in evaluation?

"As has been the case for many psychologists who become evaluators, I became involved in conducting evaluations because of my experience in measurement and testing. Because of my expertise, a colleague recommended me to a team of professors who were writing a research proposal and needed an evaluator to develop the assessment plan. I accepted this first challenge, and since then, have been invited by many other groups to design the assessment component of their research proposals. Another factor that encouraged my development in the field was the Self-Study Evaluation conducted for the Middle States Commission visit to our campus in 2005. For this visit, I served as the educational assessment coordinator, and subsequently, developed my department's educational assessment plan. Since my first evaluation in 2003, I have designed and conducted evaluations for projects funded by NSF, NOAA, USDA, and NIH, both in Puerto Rico and in the continental US. My plans are to develop an evaluation resource center on my campus to offer advice and training to researchers, evaluators, and non-profit organizations."

What is the most memorable or meaningful evaluation that you have been a part of - and why?

"The most meaningful evaluation that I have conducted was for the University of Delaware's Disaster Research Center (DRC) Research Experience for Undergraduates Program (NSF-REU). I was the evaluator of this program for six years, until it came to an end this past summer. This evaluation was meaningful because of the respect for the evaluation process demonstrated by all the staff involved in the project, their openness to constructive criticism, their constant interest in improvement, and their willingness to implement recommendations. It is very rewarding to conduct an evaluation when the process is welcomed and understood for what it is - a constructive learning experience conducive to improvement."

What advice would you give to those new to the field?

"Network, go to conferences and institutes, and collaborate with others - this is a 'must.' I encourage new evaluators to become active in professional associations, such as AEA, and to take advantage of educational activities such as webinars and professional meetings."

If you know someone who represents The Face of AEA, send recommendations to AEA's Communications Director, Gwen Newman, at gwen@eval.org.

Meet Ben McClanahan - AEA's Web Coordinator

Welcome to Ben McClanahan - AEA's newest staff member who offers his expertise to help our many TIG webmasters shine. It's a timely addition and a natural fit.

"I started working with AEA in March to help manage its TIG websites, which are built on a platform I work with extensively in my work at the International Association of Administrative Professionals (IAAP)," says McClanahan. "Both organizations use the Higher Logic web platform for their community websites, so it was a very natural fit for me to help AEA. The first priority," he adds, "was developing a website for TIG webmasters to use as a resource. This site includes tutorials, demonstrations, discussion threads and other documents to help TIG webmasters hit the ground running when they are working with a new site or when they are taking over for a previous webmaster. I also work directly with TIG webmasters when they have problems or questions about how to use their site. This usually involves email correspondence, phone calls and occasional live training sessions via conference call or webinar."

Ben also serves as Internet Communications Coordinator for IAAP, a professional organization for office professionals with about 25,000 members worldwide. "I work with about 250 different chapter webmasters on keeping their sites up-to-date and also assist the general membership with using our website. Prior to IAAP," he says, "I worked at Penton Media, a business-to-business publishing company, as an online content strategy manager. I am based out of Kansas City, Mo., and live with my wife and two boys."

Meanwhile, AEA is currently home to 46 Topical Interest Groups that represent a wide range of interests and vary widely in the size of their membership. About half maintain a dedicated website where members can find timely information and locate helpful resources. Bringing Ben aboard helps the TIGs maintain consistency across the board and continuity year to year.

"My primary role with each individual TIG is to provide initial webmaster training and then support throughout the year as they run into issues or have questions," says McClanahan. "Each year, the TIG webmasters change over, so we end up with new people working on the sites. What I'm mainly focusing on now is reviewing the sites and in some cases reaching out to TIG webmasters to ask if there's anything I can do to help them. I've also put together an extensive webmasters site that contains tutorials and how-to's in case they want step-by-step directions. It goes over things like changing page layouts, managing content, embedding content (YouTube, graphs, charts, etc.), so hopefully once our TIG webmasters become more comfortable with those features, we'll see more of them on our sites and the value of the TIG sites will increase. That is something AEA did not have before, so I'm hoping it becomes a valuable resource for TIG webmasters."

For more information about AEA's Topical Interest Groups, click here, or contact Ben directly at ben@eval.org for questions regarding your TIG website.

TechTalk - Tools for Successful Collaboration

From LaMarcus Bolton, AEA Technology Director

Just a year and a half ago, at the Evaluation 2009 conference, I had the pleasure of meeting many within the evaluation blogging community at a roundtable session on blogging. Since then, evaluation-related blogging and tweeting seem to have grown at an unprecedented rate. Wanting to shed more light on the topic, I recently chatted with a few of the evaluation blogging trailblazers in the hope of gaining greater insight into the benefits of blogging within the evaluation community.

There are numerous benefits to blogging within the evaluation community. For example, blogs may increase transparency, which may in turn improve trust, commitment, and accountability with stakeholders. Nonetheless, the primary theme that emerged from my chats was that blogs are an excellent tool for knowledge dissemination and learning. David Fetterman of Fetterman & Associates (Empowerment Evaluation blog) noted that blogs are "...a simple and easy way to share insights, accomplishments, and even missteps with colleagues. They enable us to highlight our work, ask for assistance and critique, and learn from others internationally - almost instantaneously." Jara Dean-Coffey of jdcPartnerships shared a similar motivation for maintaining her To What End blog, adding that blogging allows her to "...be a part of the larger conversation and to share my ideas, challenges, and experiences in the work." Moreover, sharing insights through blogs facilitates learning for subscribers. E. Jane Davidson of Real Evaluation Ltd., who blogs with Patricia Rogers (Genuine Evaluation blog), explained, "Blogs can be a fantastic way to keep up with the cutting-edge thinking of key people as it happens - and to contribute to idea development - without having to wait for the ideas to appear in print." So, blogs seem pretty helpful to me!

If you wish to read more blogs, you may want to begin by checking out AEA's evaluator and evaluation blogs compilation page. Here, we have compiled many great evaluation-related blogs, most by AEA members. The majority offer subscription options, normally via email and/or RSS alerts. If you plan to be an active subscriber who contributes to the knowledge sharing, just be sure to keep blog comment etiquette in mind! Or, if you wish to take the plunge and start your own evaluation blog (we encourage you to!), a variety of resources are available to get you started - from choosing a blogging platform or service to general tips on getting started writing.

Do you have other blogs or blog resources to share? We'd love to hear more! Shoot me an email at marcus@eval.org.

The opinions above are my own and do not represent the official position of AEA nor are they an endorsement by the association.

Evaluation: Seeking Truth or Power?

AEA members Pearl Eliadis, Jan-Eric Furubo and Steve Jacob are editors of Evaluation: Seeking Truth or Power?, a new book released by Transaction Publishers.

From the Publisher's Site:

"Evaluation has come of age. Today most social and political observers would have difficulty imagining a society where evaluation is not a fixture of daily life, from individual programs to local authorities to parliamentary committees. While university researchers, grant makers and public servants may think there are too many types of evaluation, rankings and reviews, evaluation is nonetheless viewed positively by the public. It is perceived as a tool for improvement and evaluators are seen as dedicated to using their knowledge for the benefit of society.

The book examines the degree to which evaluators seek power for their own interests. This perspective is based on a simple assumption: If you are in possession of an asset that can give you power, why not use it for your own interests? Can we really trust evaluation to be a force for the good? To what degree can we talk about self-interest in evaluation, and is this self-interest something that contradicts other interests such as "the benefit of society?" Such questions and others are addressed in this brilliant, innovative, international collection of pioneering contributions."

From the Editors:

"The trigger of this book was the fact that evaluation itself has become a very important ingredient in public life. Extensive resources are spent on evaluation. So, it is time to see evaluation as a powerful player in its own right. The book results from a collective reflection of the members of the International Evaluation Research Group (INTEVAL) animated by Ray C. Rist. It's a comparative endeavour that involves 13 authors from eight countries in North America and Europe with different academic backgrounds. By compiling national and international experiences, this book shows that the issue of power in evaluation resonates globally and highlights cases where power relationships play a decisive role in the evaluation process from the beginning (e.g., definition of the mandate, drafting of requests for proposals) to end (e.g., use and misuse of evaluation results)."

About the Editors:

Pearl Eliadis is a human rights lawyer, author and lecturer, and principal of her Montreal law firm. Trained at McGill and Oxford universities, she is admitted to the Quebec and Ontario Bars and teaches Civil Liberties at McGill's Law Faculty.

Jan-Eric Furubo has published many works on evaluation methodology, the role of evaluation in democratic decision making processes and its relation to budgeting and auditing.

Steve Jacob has been a professor in the Department of Political Science at Laval University since 2004. He was the director of the Master in Public Affairs program (2006-2010) and president of the Interfaculty Research Ethics Committee (2006-2010). He is the director of PerƒEval, the research laboratory on public policy performance and evaluation at Laval University.

Final Call for SAMEA Conference September 5-9 in Johannesburg

The South African Monitoring and Evaluation Association (SAMEA) will host its third biennial conference September 5-9 in Johannesburg.

This year's theme is M&E 4 Outcomes: Answering the 'So What' Question. The keynote speaker is David Fetterman, Director of Evaluation at Stanford University.

In addition to onsite sessions, a virtual symposium will be hosted on the Wits Programme Evaluation Group website. A final call for proposals is underway and can be viewed online at the SAMEA website. For more information or to submit proposals, contact brabie@sun.ac.za and info@samea.org.za.

Data Den - Variation Across Participant Groups

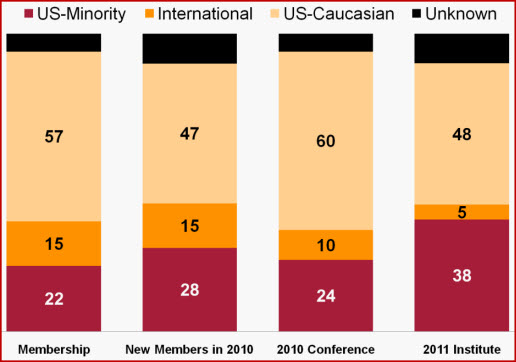

From Susan Kistler, AEA Executive Director

This month's offering from the Data Den? We're just back from the Summer Institute, where for the first time we collected data on ethnicity from the full breadth of our Institute attendees. A recent AEA Task Force recommended expanding the association's capacity to document and track its progress in the area of minority representation, and we're striving to improve our data collection and reporting. Since over half of those at the Institute are not members, and historically we haven't collected demographic data from nonmembers, we had some work to do. One challenge was that for government attendees, who make up a large fraction of the nonmembers in attendance, an administrator often completes the registration on the attendee's behalf and often does not fill out the background information questions. Through follow-up with registrants, we confirmed basic demographic information for over 90% of those in attendance and gained a better understanding of how Institute attendees resemble and differ from our overall membership, new members, and conference attendees.

New Jobs & RFPs from AEA's Career Center

What's new this month in the AEA Online Career Center? The following positions and Requests for Proposals (RFPs) have been added recently:

- Program Officer at Transparency & Accountability Initiative (London, UNITED KINGDOM)

- Senior Research Associate at Synergy Enterprises Inc. (Silver Spring, MD, USA)

- Chief of Programs at Youth UpRising (Oakland, CA, USA)

- Evaluator at Green River Regional Educational Cooperative (Bowling Green, KY, USA)

- Monitoring and Evaluation Director at The QED Group (Kandahar, AFGHANISTAN)

- Internal Program Evaluator at Wright State University (Dayton, OH, USA)

- Research Program Evaluator at Susan G. Komen for the Cure (Dallas, TX, USA)

- Project Director for National Evaluation of Social Innovation Fund at Duke University, Fuqua School of Business (Durham, NC, USA)

- Research Assistant Professor at University of Missouri, St. Louis (St. Louis, MO, USA)

- Program Officer for Evaluation at Fetzer Institute (Kalamazoo, MI, USA)

Descriptions for each of these positions, and many others, are available in AEA's Online Career Center. According to Google Analytics, the Career Center received approximately 6,300 unique page views in the past month. It is an outstanding resource for posting your resume or position, or for finding your next employer, contractor or employee. Job hunting? You can also sign up to receive notifications of new position postings via email or RSS feed.

About Us

The American Evaluation Association is an international professional association of evaluators devoted to the application and exploration of evaluation in all its forms.

The American Evaluation Association's mission is to:

- Improve evaluation practices and methods

- Increase evaluation use

- Promote evaluation as a profession and

- Support the contribution of evaluation to the generation of theory and knowledge about effective human action.

phone: 1-508-748-3326 or 1-888-232-2275
